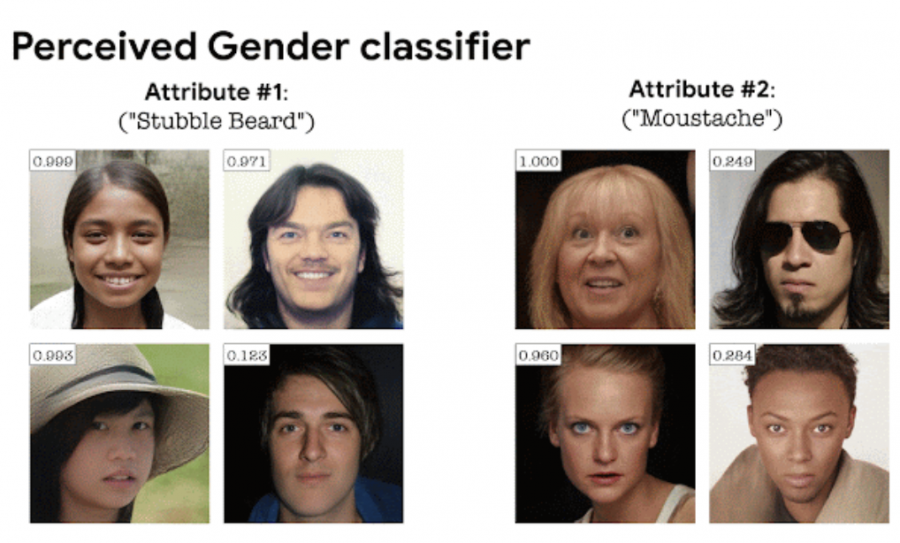

StylEx: Visualizing Key Classifier Attributes

Google has introduced StylEx, a tool for highlighting the attributes that affect image classifiers. StylEx makes it possible to explain a classifier's decision-making process and to find errors in models.

Identifying which features of an image lead a model to assign an object to one class rather than another is one of the key problems in the field of neural networks. Explaining the decision-making process is important in medical image analysis and autonomous driving, as well as for detecting errors in models.

Existing approaches to this problem, such as attention maps (Grad-CAM), show which areas of the image affect classification, but they do not explain which attributes of these areas, for example their color or shape, determine the classification result. Another family of methods provides an explanation by smoothly transforming an image from one class to another (e.g. GANalyze). However, these methods tend to change all attributes at the same time, which makes it difficult to isolate individual attributes.

StylEx automatically detects and visualizes the attributes that affect the classifier. Because each attribute can be manipulated on its own, you can explore the influence of individual attributes separately. StylEx is applicable to a wide range of domains, including the classification of animals, plants and human faces. The attributes it finds correspond to semantic attributes and are easy to interpret for a given model.

For example, to understand how a classifier of dogs and cats works, StylEx can visualize how manipulating each attribute affects the classifier's output. From the figure above, the following conclusions can be drawn: “dogs have their mouths open more often than cats” (attribute 4), “cats’ eyes are narrower” (attribute 5), “cats’ ears stick out more often than dogs’” (attribute 1), and so on.
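
The idea of changing one attribute at a time and watching the classifier's output can be illustrated with a small toy sketch. This is not StylEx's implementation. The function `rank_attributes`, the stand-in `toy_generate` and `toy_classify` functions, and the `delta` step size are all hypothetical names introduced here for illustration. In a real setting, the generator and classifier would be trained neural networks.

```python
import numpy as np

def rank_attributes(generate, classify, styles, delta=1.0):
    """Shift each attribute alone and measure the mean change in classifier output.

    generate: maps an attribute (style) vector to an image-like array (assumed).
    classify: maps an image-like array to a probability in [0, 1] (assumed).
    styles:   array of shape (n_samples, n_attributes).
    """
    base = np.array([classify(generate(s)) for s in styles])
    n_attrs = styles.shape[1]
    effects = np.zeros(n_attrs)
    for k in range(n_attrs):
        shifted = styles.copy()
        shifted[:, k] += delta  # change only attribute k, keep the rest fixed
        probs = np.array([classify(generate(s)) for s in shifted])
        effects[k] = np.mean(probs - base)
    # Most influential attributes first, by magnitude of the mean change.
    order = np.argsort(-np.abs(effects))
    return order, effects

# Toy stand-ins: an identity "generator" and a logistic "classifier".
weights = np.array([0.0, 2.0, -0.5, 0.1])

def toy_generate(style):
    return style.copy()

def toy_classify(image):
    return 1.0 / (1.0 + np.exp(-(weights @ image)))

rng = np.random.default_rng(0)
styles = rng.normal(size=(200, 4))
order, effects = rank_attributes(toy_generate, toy_classify, styles)
print("attributes ranked by influence:", order)
print("mean change in classifier output:", effects)
```

In this toy setup the attribute with the largest classifier weight comes out on top, and the sign of each effect shows which class the attribute pushes the prediction toward. This mirrors the kind of per-attribute reading made from the figure.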