A group of researchers from the University of Edinburgh has trained a neural network to animate game characters. In their paper, “Neural State Machine for Character-Scene Interactions”, they propose a new deep learning framework for modeling character-scene interactions.

Many 3D games, open-world games for example, have large and highly complex environments, which makes animating characters a challenging task. The complexity of these game worlds allows for a large number of non-trivial interactions between the characters and the environment. Animating the characters or modeling the interactions manually is cumbersome and time-consuming. For this reason, the researchers have proposed a method that learns these interactions from data.

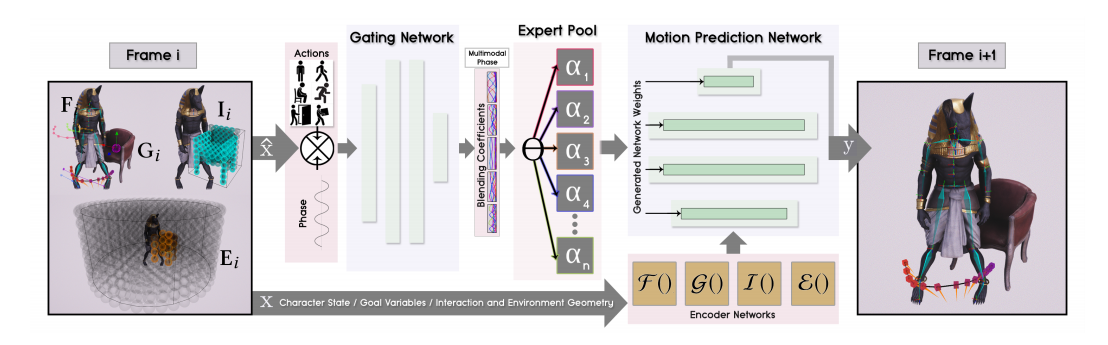

The method takes high-level instructions, such as the goal location in the game and the action to be performed, and produces a sequence of steps (movements and transitions) to reach the requested state. The architecture is composed of two sub-networks: a gating network and a motion prediction network.

The gating network consumes a subset of the parameters of the current state and the goal and produces “blending coefficients”, which are used to generate the weights of the second network, the motion prediction network. That network then takes the posture and parameters from the previous frame and predicts how they evolve, i.e. their state in the current frame. In this way, a “neural state machine” is constructed that is intended to learn different modes of motion across drastically different tasks.
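The interplay between the two sub-networks can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: it assumes the blending coefficients mix several fixed "expert" weight sets into one set of weights for a small two-layer motion prediction network, and every name, layer size, and activation below is hypothetical. The weights are random and untrained, so the output is meaningless as animation and only shows the data flow.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
GATING_IN = 8     # subset of state/goal parameters fed to the gating network
MOTION_IN = 16    # posture and parameters from the previous frame
MOTION_OUT = 16   # predicted posture and parameters for the current frame
HIDDEN = 32
NUM_EXPERTS = 4   # assumed number of weight sets that get blended

# Gating network (assumed here to be one linear layer plus softmax).
gate_W = rng.normal(0.0, 0.1, (NUM_EXPERTS, GATING_IN))
gate_b = np.zeros(NUM_EXPERTS)

# One weight set per expert for a two-layer motion prediction network.
expert_W1 = rng.normal(0.0, 0.1, (NUM_EXPERTS, HIDDEN, MOTION_IN))
expert_b1 = np.zeros((NUM_EXPERTS, HIDDEN))
expert_W2 = rng.normal(0.0, 0.1, (NUM_EXPERTS, MOTION_OUT, HIDDEN))
expert_b2 = np.zeros((NUM_EXPERTS, MOTION_OUT))


def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()


def blending_coefficients(gating_features):
    """Gating network: map state/goal features to coefficients that sum to 1."""
    return softmax(gate_W @ gating_features + gate_b)


def predict_next_frame(prev_frame, gating_features):
    """Blend the expert weights, then run the motion prediction network."""
    alpha = blending_coefficients(gating_features)
    # Weighted sum over the expert axis yields one concrete set of weights.
    W1 = np.tensordot(alpha, expert_W1, axes=1)   # shape (HIDDEN, MOTION_IN)
    b1 = alpha @ expert_b1                        # shape (HIDDEN,)
    W2 = np.tensordot(alpha, expert_W2, axes=1)   # shape (MOTION_OUT, HIDDEN)
    b2 = alpha @ expert_b2                        # shape (MOTION_OUT,)
    hidden = np.maximum(0.0, W1 @ prev_frame + b1)  # ReLU, an assumption
    return W2 @ hidden + b2


# Autoregressive rollout: each predicted frame becomes the next input.
# For simplicity the gating features are held fixed across frames here.
frame = rng.normal(size=MOTION_IN)
goal_features = rng.normal(size=GATING_IN)
for _ in range(3):
    frame = predict_next_frame(frame, goal_features)
```

Because the motion network's weights are recomputed from the coefficients on every frame, a change in the state or goal features shifts which blend of weights is active, which is one way to read the "state machine" analogy.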

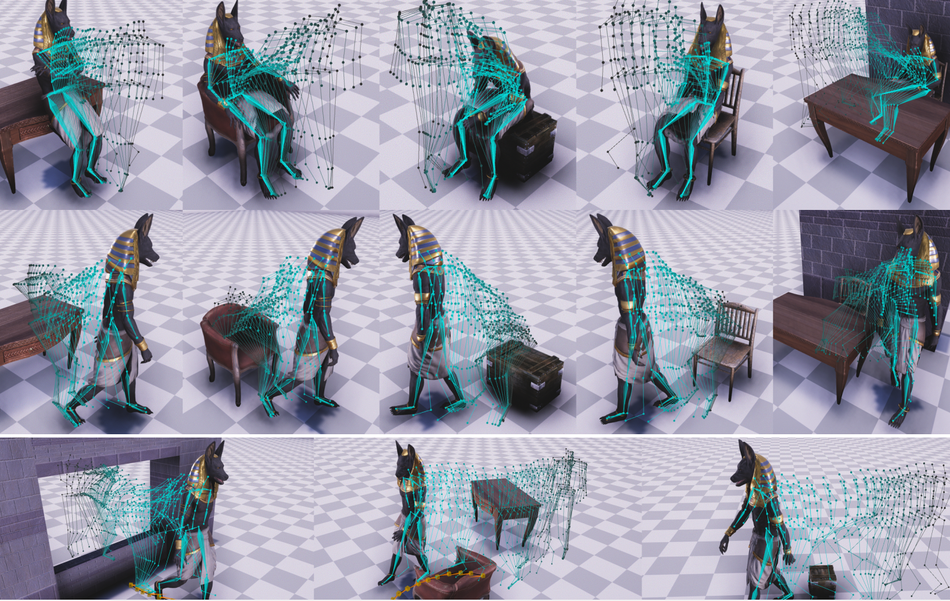

The researchers evaluated the proposed method and demonstrated its capability to learn character-scene interactions directly from data. They tested the model on tasks such as sitting on a chair, avoiding obstacles, opening a door, and picking up and carrying objects. According to them, the model handles both cyclic and acyclic movements and can be applied in real-time applications.

More details about the proposed method can be found in the paper. The implementation was open-sourced and can be found here.