Google AI has introduced GraphWorld, a benchmark for graph neural networks. It uses several million synthetic datasets that reproduce a wide class of graphs, and it produces a generalized assessment of a neural network by testing it on all of them.

Graph neural networks (GNNs) are powerful machine learning models for graphs. They use the connections inherent in a graph to bring context into predictions about individual elements of the graph or about the graph as a whole. GNNs are used effectively for drug discovery, theorem proving, misinformation detection, and many other tasks.
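
To make "using connections to include context" concrete, here is a minimal sketch of one round of neighborhood aggregation, the basic step most GNN layers build on. It is not a full GNN (there are no learned weights), and the function name `aggregate_neighbors` and the toy graph are illustrative assumptions, not part of GraphWorld.

```python
def aggregate_neighbors(features, edges):
    """One round of mean aggregation on an undirected graph.

    Each node's new vector is the average of its own vector and
    the vectors of its direct neighbors.
    """
    neighbors = {node: [] for node in features}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)

    updated = {}
    for node, own in features.items():
        group = [own] + [features[n] for n in neighbors[node]]
        updated[node] = [
            sum(vec[i] for vec in group) / len(group)
            for i in range(len(own))
        ]
    return updated


# Toy path graph a - b - c with 2-dimensional node features.
features = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.0, 0.0]}
edges = [("a", "b"), ("b", "c")]
context = aggregate_neighbors(features, edges)
```

After one round, node `c`, which started with an all-zero vector, carries information from its neighbor `b`. Repeating the step spreads context further across the graph.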

The surge of interest in GNNs over the past decade has produced thousands of GNN variants. Far less attention has been paid, however, to the methods and datasets used to evaluate them. Many GNN papers reuse the same 5-10 reference datasets, most of which are built from easily labeled academic citation networks and molecular datasets. As a result, the performance of new GNN variants can be tested only on a limited class of graphs.

Whereas each GNN reference dataset in the literature represents just one type of graph, GraphWorld generates the full diversity of graph types directly from probabilistic models, tests GNN models on each type, and derives generalized conclusions from the combined results.

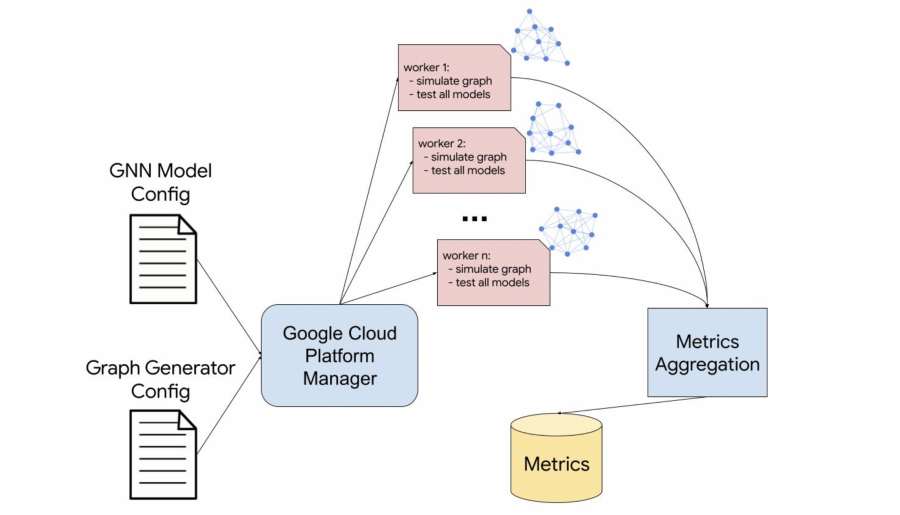

To run GraphWorld, the user configures a parameterized dataset generator and supplies the model files they want to test. GraphWorld launches a set of processes, each of which generates a new graph with different properties and tests all of the provided models on it. The metrics from every process are then aggregated and returned to the user.
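
The workflow described above can be sketched in a few dozen lines of plain Python. This is a toy illustration under stated assumptions, not GraphWorld's actual API: every name here (`sample_config`, `generate_graph`, `run_worker`, `run_benchmark`) is hypothetical, a two-community stochastic block model stands in for the probabilistic generator, the "models" are trivial baselines rather than GNNs, and a sequential loop stands in for the parallel processes.

```python
import random
from statistics import mean


def sample_config(rng):
    """Draw generator parameters for one synthetic graph (ranges are illustrative)."""
    return {
        "num_nodes": rng.randint(20, 60),
        "p_in": rng.uniform(0.2, 0.6),    # edge probability inside a community
        "p_out": rng.uniform(0.01, 0.2),  # edge probability across communities
    }


def generate_graph(config, rng):
    """Two-community stochastic block model; returns (labels, adjacency lists)."""
    n = config["num_nodes"]
    labels = [i % 2 for i in range(n)]
    adj = [[] for _ in range(n)]
    for u in range(n):
        for v in range(u + 1, n):
            p = config["p_in"] if labels[u] == labels[v] else config["p_out"]
            if rng.random() < p:
                adj[u].append(v)
                adj[v].append(u)
    return labels, adj


def majority_model(labels, adj, node, rng):
    """Toy baseline: predict the most common label among the node's neighbors."""
    votes = [labels[n] for n in adj[node]]
    if not votes:
        return rng.randint(0, 1)
    return int(sum(votes) * 2 >= len(votes))


def random_model(labels, adj, node, rng):
    """Toy baseline: guess a label uniformly at random."""
    return rng.randint(0, 1)


def run_worker(seed, models):
    """One 'process': generate a graph, then score every model on it."""
    rng = random.Random(seed)
    config = sample_config(rng)
    labels, adj = generate_graph(config, rng)
    row = dict(config)
    for name, model in models.items():
        correct = sum(
            model(labels, adj, node, rng) == labels[node]
            for node in range(len(labels))
        )
        row[name] = correct / len(labels)
    return row


def run_benchmark(models, num_graphs=50):
    """Run all workers and aggregate per-model accuracy across graphs."""
    rows = [run_worker(seed, models) for seed in range(num_graphs)]
    summary = {name: mean(row[name] for row in rows) for name in models}
    return rows, summary


models = {"majority": majority_model, "random": random_model}
rows, summary = run_benchmark(models, num_graphs=50)
print(summary)
```

Each row keeps the generator parameters next to the scores, so results can be sliced by graph property (for example, accuracy as a function of `p_out`) as well as averaged over the whole population of graphs.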