ArtFlow is a framework for lossless image style transfer based on reversible neural flows. The open-source code is available on GitHub.

Why it is needed

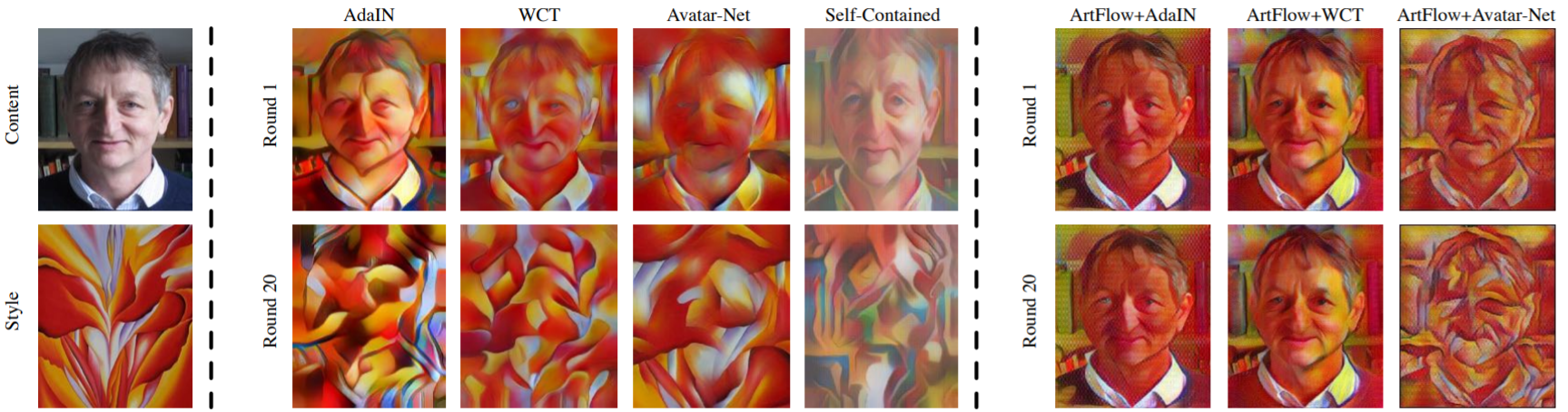

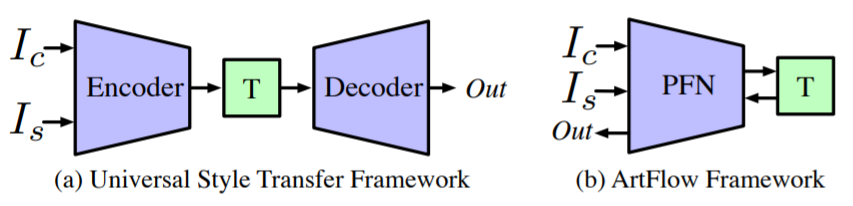

The Universal Style Transfer (UST) task consists of applying the style of one picture to another. For example, you can make a photo look like a drawing by a famous artist. Modern UST frameworks such as AdaIN, WCT, and Avatar-Net suffer from content loss in the original image. This means that the stylization process gradually erases the outlines of objects, making the picture less and less recognizable. The described method eliminates the content loss problem and makes the style transfer reversible.

What is the innovation

- The authors identified the root causes of content loss in AdaIN, WCT, and Avatar-Net.

- They proposed the Projection Flow Network (PFN), a lossless reversible neural network based on neural flows.

- Building on PFN, they proposed ArtFlow, a new method implemented in PyTorch. Its results are comparable to those of modern UST frameworks, but without the loss of image content.

How it works

The core of ArtFlow is a Projection Flow Network (PFN), in which every data transformation is reversible. Specifically, PFN chains together three types of transformations:

- additive coupling;

- invertible 1 × 1 convolution;

- Actnorm (activation normalization layer).

Because each of them is reversible, no information is lost in either the forward or the reverse pass.
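The reversibility of the three transformations can be checked with a small NumPy sketch. This is an illustration under stated assumptions, not the ArtFlow code: the class names, the layer order inside a block, the random fixed weights, and the `tanh` stand-in for the learned coupling sub-network are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

class ActNorm:
    """Per-channel affine transform; the inverse undoes the scale and bias."""
    def __init__(self, channels):
        # Hypothetical fixed parameters; in a trained network these are learned.
        self.scale = rng.uniform(0.5, 1.5, size=(channels, 1, 1))
        self.bias = rng.normal(size=(channels, 1, 1))
    def forward(self, x):
        return x * self.scale + self.bias
    def inverse(self, y):
        return (y - self.bias) / self.scale

class Conv1x1:
    """1 x 1 convolution = the same channel-mixing matrix at every pixel."""
    def __init__(self, channels):
        # A random orthogonal matrix (from QR) is always invertible.
        q, _ = np.linalg.qr(rng.normal(size=(channels, channels)))
        self.w = q
        self.w_inv = np.linalg.inv(q)
    def forward(self, x):
        return np.einsum("oc,chw->ohw", self.w, x)
    def inverse(self, y):
        return np.einsum("oc,chw->ohw", self.w_inv, y)

class AdditiveCoupling:
    """Shift the second half of the channels by a function of the first half."""
    def __init__(self, channels):
        self.half = channels // 2
        # Stand-in for the learned sub-network: fixed linear map followed by tanh.
        self.w = rng.normal(size=(channels - self.half, self.half))
    def _shift(self, xa):
        return np.tanh(np.einsum("oc,chw->ohw", self.w, xa))
    def forward(self, x):
        xa, xb = x[:self.half], x[self.half:]
        return np.concatenate([xa, xb + self._shift(xa)], axis=0)
    def inverse(self, y):
        # The first half passed through unchanged, so the shift can be recomputed.
        ya, yb = y[:self.half], y[self.half:]
        return np.concatenate([ya, yb - self._shift(ya)], axis=0)

def build_flow(channels, depth):
    # Assumed block order (ActNorm, 1 x 1 convolution, coupling), for illustration only.
    layers = []
    for _ in range(depth):
        layers += [ActNorm(channels), Conv1x1(channels), AdditiveCoupling(channels)]
    return layers

def flow_forward(layers, x):
    for layer in layers:
        x = layer.forward(x)
    return x

def flow_inverse(layers, z):
    # Undo the layers in the opposite order.
    for layer in reversed(layers):
        z = layer.inverse(z)
    return z

layers = build_flow(channels=4, depth=3)
x = rng.normal(size=(4, 8, 8))      # one feature map shaped (C, H, W)
z = flow_forward(layers, x)         # forward projection
x_rec = flow_inverse(layers, z)     # reverse pass
max_error = float(np.max(np.abs(x - x_rec)))
```

Here `max_error` stays at the level of floating-point rounding: the input is recovered from the output without any approximation step, and the projected features keep the same shape as the input.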

In addition, the authors found that the style transfer modules of AdaIN and WCT do not introduce any content loss themselves. These modules became part of ArtFlow to help achieve a clean style transfer.
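As an illustration of such a transfer module, below is a minimal NumPy sketch of the AdaIN operation: the content features are normalized per channel and then rescaled to the per-channel mean and standard deviation of the style features. The function name `adain`, the `(C, H, W)` feature layout, and the random inputs are assumptions made for this example; in ArtFlow the operation would presumably be applied to features produced by the PFN rather than to raw arrays like these.

```python
import numpy as np

def adain(content_feat, style_feat, eps=1e-5):
    """Match the per-channel mean and std of content features to the style features.

    Both inputs are assumed to be arrays shaped (C, H, W).
    """
    c_mean = content_feat.mean(axis=(1, 2), keepdims=True)
    c_std = content_feat.std(axis=(1, 2), keepdims=True) + eps  # eps avoids division by zero
    s_mean = style_feat.mean(axis=(1, 2), keepdims=True)
    s_std = style_feat.std(axis=(1, 2), keepdims=True) + eps
    normalized = (content_feat - c_mean) / c_std
    return normalized * s_std + s_mean

rng = np.random.default_rng(0)
content = rng.normal(0.0, 1.0, size=(3, 16, 16))  # hypothetical content features
style = rng.normal(2.0, 3.0, size=(3, 16, 16))    # hypothetical style features
stylized = adain(content, style)
```

After the call, each channel of `stylized` has approximately the mean and standard deviation of the corresponding channel of `style`, while the spatial pattern within each channel still comes from `content`.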