Israeli researchers have presented a model that generates makeup patterns designed to evade facial recognition systems. When experiment participants applied cosmetics following the patterns produced by the neural network, they were correctly recognized in only 1.22% of cases.

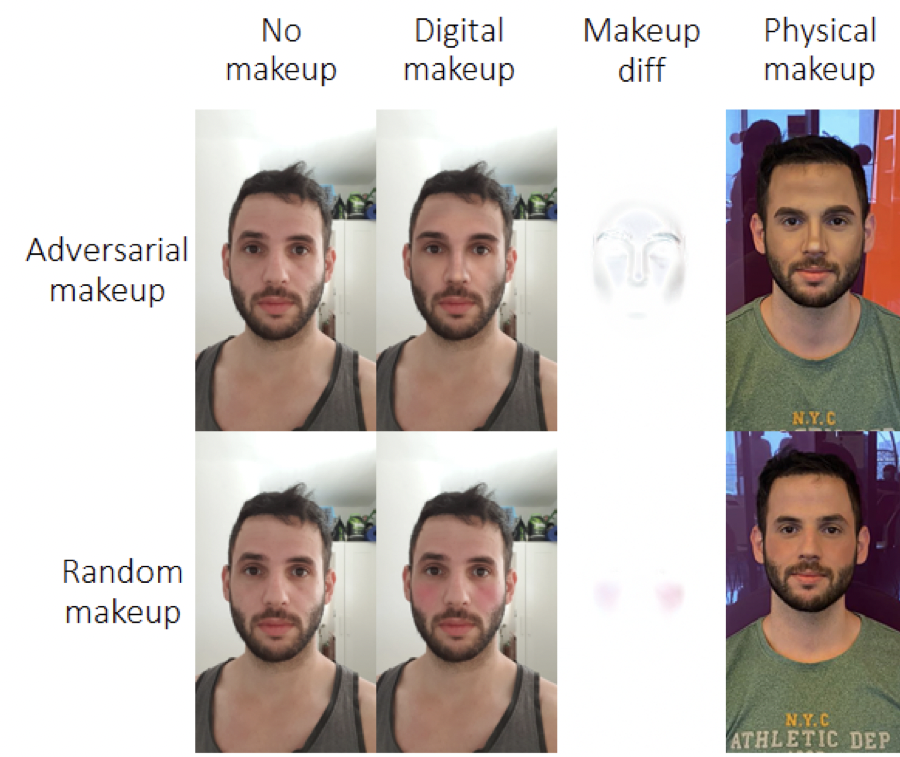

The generative adversarial neural network works as follows. First, it uses an image of the volunteer to determine which areas of the face have the greatest influence on the facial recognition model's identification decision. Then synthetic makeup is applied to these areas. These two steps are repeated until the facial recognition model can no longer correctly identify the person.
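The loop of finding the most influential facial areas, applying makeup there, and re-checking the recognizer can be illustrated with a toy sketch. This is not the researchers' model: `toy_recognizer`, `TOY_WEIGHTS`, the region names, the threshold, and the makeup strength are all hypothetical values chosen for illustration, and influence is estimated here by simply masking one region at a time.

```python
from typing import Callable, Dict, List, Tuple

# A face is modeled as: region name -> how strongly that region still
# matches the enrolled identity (1.0 = unchanged, 0.0 = fully hidden).
Face = Dict[str, float]

# Hypothetical region weights for a toy recognizer; not taken from the study.
TOY_WEIGHTS = {"forehead": 0.15, "eyebrows": 0.25, "nose": 0.30,
               "cheeks": 0.10, "jaw": 0.20}


def toy_recognizer(face: Face) -> float:
    """Return a match score in [0, 1]; higher means 'same person'."""
    return sum(TOY_WEIGHTS[region] * face[region] for region in TOY_WEIGHTS)


def region_influence(model: Callable[[Face], float], face: Face) -> Dict[str, float]:
    """Step 1: score drop caused by masking each region in turn."""
    base = model(face)
    return {region: base - model({**face, region: 0.0}) for region in face}


def plan_makeup(model: Callable[[Face], float], face: Face,
                threshold: float = 0.5, strength: float = 0.6,
                max_steps: int = 20) -> Tuple[List[str], float]:
    """Repeat steps 1 and 2 until the score falls below the threshold."""
    face = dict(face)  # work on a copy
    plan: List[str] = []
    for _ in range(max_steps):
        if model(face) < threshold:  # the recognizer no longer identifies the face
            break
        influence = region_influence(model, face)
        target = max(influence, key=influence.get)
        face[target] *= 1.0 - strength  # step 2: makeup weakens this region's match
        plan.append(target)
    return plan, model(face)


plan, score = plan_makeup(toy_recognizer, {region: 1.0 for region in TOY_WEIGHTS})
print(plan)   # regions to cover, in the order they were chosen
print(score)  # final match score, below the 0.5 threshold
```

With these made-up weights the loop stops after four regions. A real system would replace the toy scorer with an actual face recognition model and the multiplication with rendered makeup on the image.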

During testing, twenty volunteers (10 men and 10 women) either applied makeup according to the heat map generated by the neural network, applied random makeup, or wore no makeup at all. The volunteers then walked along a corridor equipped with two cameras placed under different lighting conditions.

Participants who wore makeup generated by the model were correctly identified in only 1.22% of cases, compared with 33.73% of cases with random makeup and 47.57% of cases with no makeup. Curiously, the makeup artist did not try to reproduce the makeup heat map exactly, yet the method still worked.
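To put the three identification rates in perspective, the relative drop against each baseline can be computed directly from the reported figures. The helper name `relative_drop` is introduced here only for illustration.

```python
# Identification rates (percent of cases) as reported in the article.
RATES = {"model makeup": 1.22, "random makeup": 33.73, "no makeup": 47.57}


def relative_drop(baseline: float, attacked: float) -> float:
    """Percentage reduction of the identification rate relative to a baseline."""
    return 100.0 * (baseline - attacked) / baseline


vs_no_makeup = relative_drop(RATES["no makeup"], RATES["model makeup"])
vs_random = relative_drop(RATES["random makeup"], RATES["model makeup"])
print(f"vs. no makeup:     {vs_no_makeup:.1f}% fewer identifications")  # 97.4%
print(f"vs. random makeup: {vs_random:.1f}% fewer identifications")     # 96.4%
```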

The study used neutral color palettes and traditional makeup techniques to achieve a natural appearance, which means that technically anyone can reproduce it.