Apple has introduced Hypersim, a synthetic dataset of photorealistic images of indoor scenes. Hypersim contains 77,400 images of 461 scenes, each with per-pixel semantic segmentation.

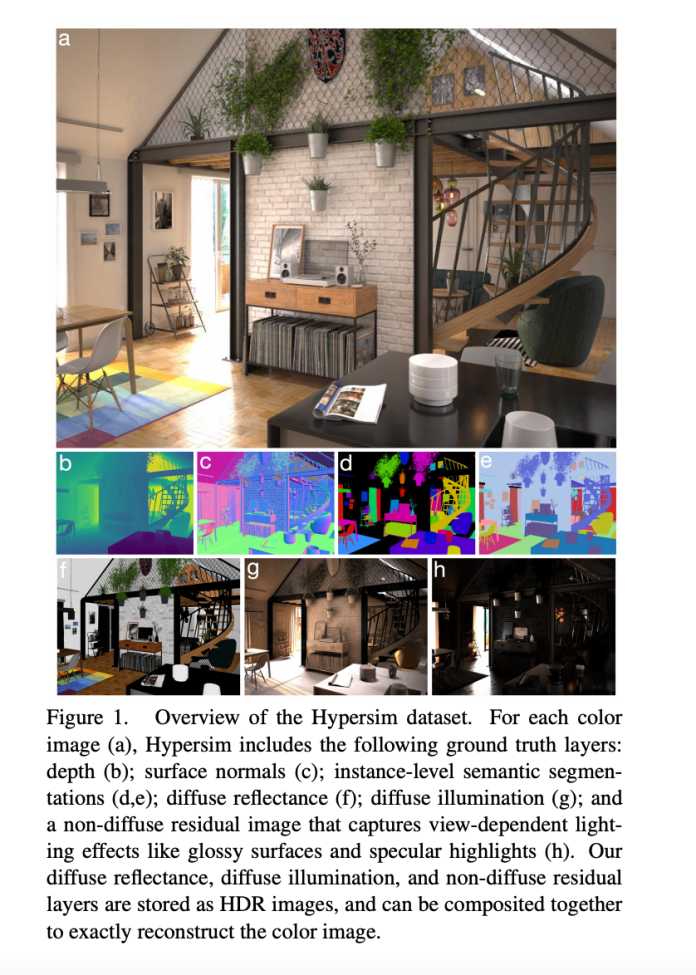

The main limitation of existing synthetic datasets is that they lack semantic segmentation, that is, the grouping of pixels into separate objects. Most of them also do not decompose images into separate lighting and shading components, which makes them unsuitable for inverse rendering problems. Hypersim addresses both problems: the dataset includes the full scene geometry, information about surface materials and lighting, and a semantic segmentation label for every pixel.
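To make "grouping pixels into separate objects" concrete, here is a minimal sketch that collects pixel coordinates by label. The label map and the label IDs are invented for illustration and are not taken from Hypersim; the function name `group_pixels_by_label` is likewise hypothetical.

```python
from collections import defaultdict


def group_pixels_by_label(label_map):
    """Return {label_id: [(row, col), ...]} for a 2D grid of integer labels."""
    groups = defaultdict(list)
    for row, line in enumerate(label_map):
        for col, label in enumerate(line):
            groups[label].append((row, col))
    return dict(groups)


# Toy 3x4 label map. The IDs (0 = wall, 1 = chair, 2 = table) are made up.
label_map = [
    [0, 0, 1, 1],
    [0, 2, 1, 1],
    [2, 2, 2, 0],
]

groups = group_pixels_by_label(label_map)
pixel_counts = {label: len(pixels) for label, pixels in groups.items()}
print(pixel_counts)
```

With a real dataset the label map would be a large array loaded from disk, and an array library would be a more practical choice than nested lists.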

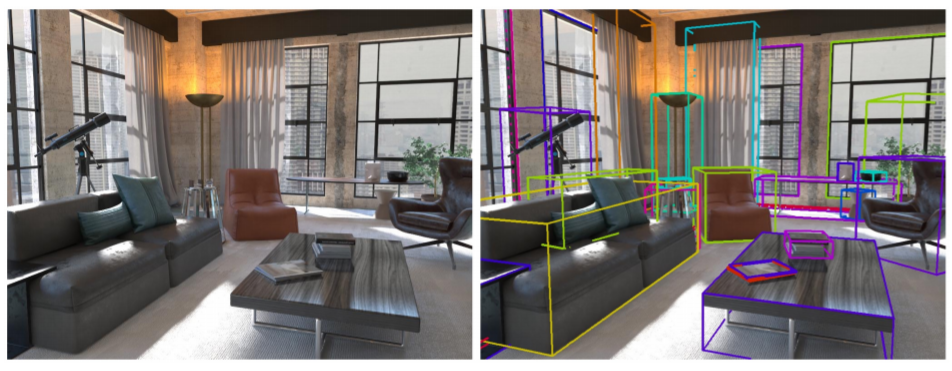

Each object is enclosed in a 3D bounding box (a parallelepiped), which makes the dataset usable for object recognition tasks.
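As a rough illustration of how such a box can be used, the sketch below computes the eight corners of a box and tests whether a point lies inside it. It assumes each box is described by a center, three orthonormal axes, and half-extents along those axes. That parameterization and the function names are assumptions made for this example, not the dataset's actual file layout.

```python
from itertools import product


def box_corners(center, axes, half_extents):
    """Return the 8 corners of a 3D box.

    center: (x, y, z) of the box center.
    axes: three unit vectors giving the box's local axes.
    half_extents: half the box size along each local axis.
    """
    corners = []
    for signs in product((-1.0, 1.0), repeat=3):
        corner = list(center)
        for sign, axis, half in zip(signs, axes, half_extents):
            for i in range(3):
                corner[i] += sign * half * axis[i]
        corners.append(tuple(corner))
    return corners


def contains(point, center, axes, half_extents):
    """Return True if the point lies inside or on the surface of the box."""
    offset = [p - c for p, c in zip(point, center)]
    for axis, half in zip(axes, half_extents):
        # Project the offset onto the box axis and compare with the half-size.
        distance = sum(o * a for o, a in zip(offset, axis))
        if abs(distance) > half:
            return False
    return True


# Hypothetical axis-aligned box, used only to exercise the functions.
center = (1.0, 2.0, 3.0)
axes = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
half_extents = (0.5, 1.0, 1.5)

corners = box_corners(center, axes, half_extents)
inside = contains((1.0, 2.0, 3.0), center, axes, half_extents)
outside = contains((2.0, 2.0, 3.0), center, axes, half_extents)
```

Because the containment test projects onto the box's own axes, the same code works for rotated boxes as long as the axes are orthonormal.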

Hypersim is built from publicly available 3D assets. Each image comes with a decomposition into three components: diffuse reflectance, diffuse illumination, and a non-diffuse residual term that captures view-dependent lighting effects.
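The sketch below shows one way the three components could be recombined into a final image. It assumes the usual intrinsic-image model, in which the first two components are multiplied per channel and the residual is added. The text above does not state this formula, so treat it as an assumption; the function names and pixel values are also made up.

```python
def recompose_pixel(reflectance, illumination, residual):
    """Combine one RGB pixel as reflectance * illumination + residual."""
    return tuple(
        r * i + e for r, i, e in zip(reflectance, illumination, residual)
    )


def recompose_image(reflectance, illumination, residual):
    """Apply recompose_pixel to every pixel of three equally sized images."""
    return [
        [recompose_pixel(r, i, e) for r, i, e in zip(r_row, i_row, e_row)]
        for r_row, i_row, e_row in zip(reflectance, illumination, residual)
    ]


# A 1x1 "image" with invented values, just to exercise the functions.
reflectance = [[(0.5, 0.25, 1.0)]]
illumination = [[(2.0, 4.0, 0.5)]]
residual = [[(0.25, 0.0, 0.125)]]

image = recompose_image(reflectance, illumination, residual)
print(image)
```

Under this model, an inverse rendering method works in the opposite direction: given only the final image, it tries to estimate the three components.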

The dataset and the code used to generate it are publicly available.