Researchers from Adobe Research and the University of California, Berkeley have developed a method that detects Photoshopped faces by scripting Photoshop.

In the past few years, Adobe Research has put increasing focus on detecting image manipulation, and this work is a successful continuation of that research. In the paper, titled “Detecting Photoshopped Faces by Scripting Photoshop”, the researchers propose a novel method that uses deep neural networks to detect malicious photo edits made with Photoshop.

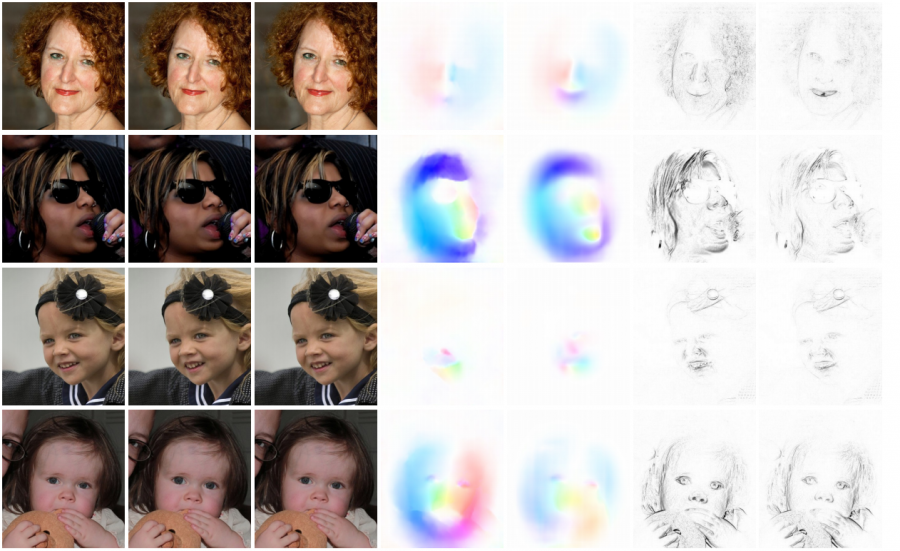

The method trains a neural network model on fake images generated automatically by scripting Photoshop. It focuses on image warping applied to human faces, a popular kind of manipulation done with Photoshop. The researchers wanted to show that the approach detects face manipulations better than humans do.
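The data-generation idea can be illustrated with a minimal sketch. It is not the authors' pipeline: the function names `random_warp` and `make_training_pairs` are hypothetical, the random arrays stand in for face photos, and a simple Gaussian-weighted displacement in NumPy stands in for the warps produced by scripting Photoshop. The point is only that every original image yields a labeled original/warped pair without any manual editing.

```python
import numpy as np


def random_warp(image, rng, strength=3.0, radius=12.0):
    """Apply a smooth, local displacement around a random point.

    Hypothetical stand-in for a scripted Photoshop warp; not the real tool.
    """
    h, w = image.shape
    ys, xs = np.mgrid[0:h, 0:w].astype(np.float64)
    cy, cx = rng.uniform(0, h), rng.uniform(0, w)
    angle = rng.uniform(0, 2 * np.pi)
    # Gaussian falloff keeps the edit local to the chosen point.
    weight = np.exp(-((ys - cy) ** 2 + (xs - cx) ** 2) / (2 * radius ** 2))
    dy = strength * np.sin(angle) * weight
    dx = strength * np.cos(angle) * weight
    # Backward mapping with nearest-neighbour sampling, clipped to the image.
    src_y = np.clip(np.rint(ys + dy), 0, h - 1).astype(int)
    src_x = np.clip(np.rint(xs + dx), 0, w - 1).astype(int)
    return image[src_y, src_x]


def make_training_pairs(originals, seed=0):
    """Return (images, labels) where label 0 = original and 1 = warped."""
    rng = np.random.default_rng(seed)
    images, labels = [], []
    for img in originals:
        images.append(img)
        labels.append(0)
        images.append(random_warp(img, rng))
        labels.append(1)
    return np.stack(images), np.array(labels)


# Random grayscale arrays used here as placeholders for face photos.
originals = np.random.default_rng(42).random((4, 32, 32))
images, labels = make_training_pairs(originals)
```

The resulting `images` and `labels` arrays could then be fed to any binary classifier; the choice of network is left open here because the article does not describe it.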

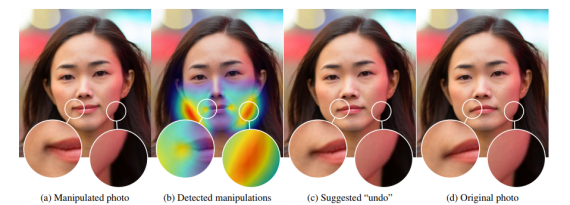

“We started by showing image pairs (an original and an alteration) to people who knew that one of the faces was altered,” … “For this approach to be useful, it should be able to perform significantly better than the human eye at identifying edited faces,” says Oliver Wang, one of Adobe’s researchers.

“Those human eyes were able to judge the altered face 53% of the time, a little better than chance. But in a series of experiments, the neural network tool achieved results as high as 99%.”
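To see why 53% is only “a little better than chance”, note that in a pairwise test a random guess is right half of the time. The sketch below scores such a test; it assumes, purely for illustration, that a detector assigns each image a manipulation score and picks the higher-scoring image in each pair as the altered one. The function name `two_afc_accuracy` and the example scores are hypothetical, and the article does not say that the reported figures were computed exactly this way.

```python
def two_afc_accuracy(scores_original, scores_altered):
    """Fraction of pairs where the altered image gets the higher score.

    Ties count as half correct, which matches guessing at random.
    """
    if len(scores_original) != len(scores_altered):
        raise ValueError("each original needs exactly one altered counterpart")
    if not scores_original:
        raise ValueError("at least one pair is required")
    correct = 0.0
    for s_orig, s_alt in zip(scores_original, scores_altered):
        if s_alt > s_orig:
            correct += 1.0
        elif s_alt == s_orig:
            correct += 0.5
    return correct / len(scores_original)


# Made-up scores for four image pairs (not data from the paper).
accuracy = two_afc_accuracy([0.1, 0.4, 0.7, 0.2], [0.9, 0.3, 0.8, 0.2])
```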

The experiments showed that the method outperforms humans by a large margin at detecting face manipulations. Moreover, the researchers report that in some cases the method can predict the exact locations of edits and even “undo” some of them.

More about the neural network model and the experiments can be found in the official pre-print paper. The implementation of the method has been open-sourced, and the code is publicly available.