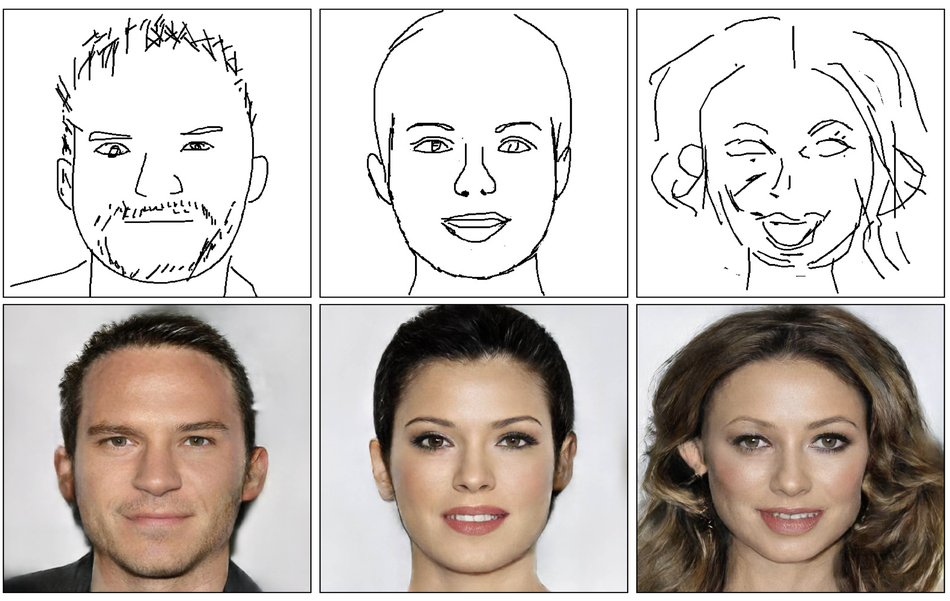

DeepFaceDrawing, a new method proposed by researchers from the University of Chinese Academy of Sciences, generates realistic face images from freehand sketches.

The method uses deep generative neural networks to learn a conditional distribution over human faces. In their paper, “DeepFaceDrawing: Deep Generation of Face Images from Sketches”, the researchers propose a novel approach to image-to-image translation, designed specifically for translating sketches into realistic face images.

The proposed method consists of three modules: a component embedding module, a feature mapping module, and an image synthesis module. The first module learns embeddings of individual face components, using a separate auto-encoder for each component. The feature mapping (FM) module decodes the per-component features extracted in the previous stage into feature maps. These feature maps are combined according to the spatial location of each component and passed to the image synthesis (IS) module, which performs the final reconstruction.
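The data flow through the three modules can be sketched in a few lines of plain Python. This is only an illustration under loud assumptions: the component names and window positions in `COMPONENTS` are hypothetical, and the functions `embed_component`, `map_features`, and `synthesize` are trivial stand-ins for the learned networks, not the authors' implementation.

```python
from typing import Dict, List, Tuple

Image = List[List[float]]          # grayscale image as rows of floats
Box = Tuple[int, int, int, int]    # (top, left, height, width)

# Hypothetical component windows on a 16x16 sketch (illustrative only).
COMPONENTS: Dict[str, Box] = {
    "left_eye": (4, 2, 4, 4),
    "right_eye": (4, 10, 4, 4),
    "nose": (7, 6, 4, 4),
    "mouth": (11, 4, 4, 8),
}

def crop(img: Image, box: Box) -> Image:
    """Cut the window described by box out of the image."""
    top, left, h, w = box
    return [row[left:left + w] for row in img[top:top + h]]

def embed_component(patch: Image) -> List[float]:
    """Stand-in for a per-component encoder: one mean value per patch row."""
    return [sum(row) / len(row) for row in patch]

def map_features(code: List[float], box: Box) -> Image:
    """Stand-in for FM: decode a component code into a map of the window size."""
    _, _, h, w = box
    return [[code[r]] * w for r in range(h)]

def combine(feature_maps: Dict[str, Image], size: int) -> Image:
    """Place each component's feature map at its spatial location on a canvas."""
    canvas = [[0.0] * size for _ in range(size)]
    for name, fmap in feature_maps.items():
        top, left, h, w = COMPONENTS[name]
        for r in range(h):
            for c in range(w):
                canvas[top + r][left + c] = fmap[r][c]
    return canvas

def synthesize(canvas: Image) -> Image:
    """Stand-in for IS: clamp the combined map to the [0, 1] range."""
    return [[min(1.0, max(0.0, v)) for v in row] for row in canvas]

def generate_face(sketch: Image) -> Image:
    """Run the three stages in order on a square sketch."""
    codes = {n: embed_component(crop(sketch, b)) for n, b in COMPONENTS.items()}
    fmaps = {n: map_features(codes[n], COMPONENTS[n]) for n in COMPONENTS}
    return synthesize(combine(fmaps, len(sketch)))
```

Calling `generate_face` on a 16x16 list of floats returns a 16x16 result in which only the component windows are filled. In a real system each stand-in would be a trained network, and the combined feature maps would have many channels rather than one.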

To train and evaluate the proposed method, the researchers built their own dataset of image-sketch pairs, based on the popular CelebAMask-HQ dataset. The evaluations showed that the method outperforms existing solutions. The evaluation also included a user study that measured subjective perceptual quality.

More details about DeepFaceDrawing can be found in the published paper. The researchers also developed a system that showcases their model, which can be accessed here. According to the official project page, the code will be released soon.
