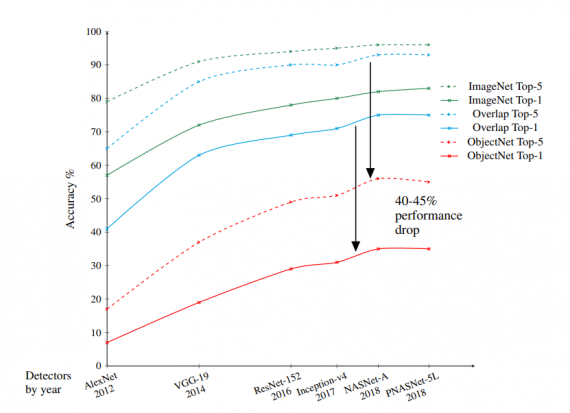

A group of researchers from MIT and IBM has developed and released a new vision dataset called ObjectNet, on which object detectors suffer a 40-45% drop in accuracy.

ObjectNet differs from the datasets commonly used in the machine learning community: it is a test set only, built specifically to challenge vision systems, and object detectors in particular.

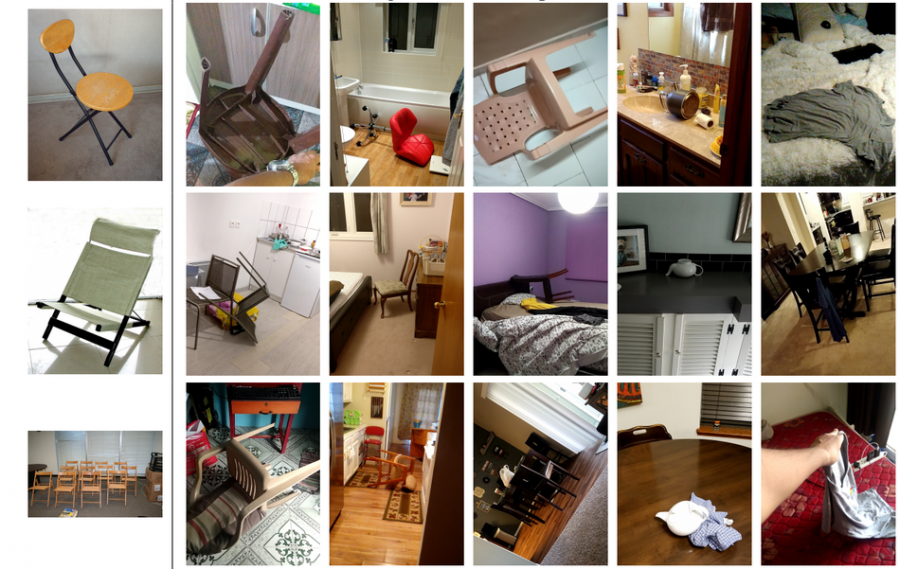

In the paper describing the dataset, the researchers explain that their goal was a dataset on which models cannot exploit trivial correlations in the data. To that end, they created ObjectNet, a large-scale, real-world image dataset in which object backgrounds, rotations, and viewpoints are randomized.

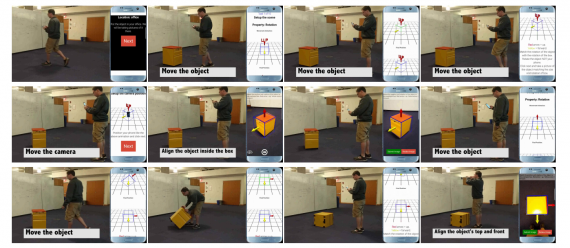

The researchers first built a highly automated platform that crowdsources both image capture and annotation, which allows them to gather data with controls. Using this platform, they collected a final dataset of 50,000 images, the same size as the ImageNet test set. The similarity ends with the image count. ObjectNet differs from ImageNet in two other ways: it is easier, because its objects are mostly centered and unoccluded, and it is harder, because of the controls on backgrounds, rotations, and viewpoints.

ObjectNet has 313 classes, 113 of which it shares with ImageNet. The researchers ran a series of experiments to test their hypothesis that large machine learning datasets lack controls and that, as a result, models trained on them do not generalize well. They showed that current object detection methods suffer a 40-45% drop in performance on ObjectNet compared with other benchmark datasets.
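When a model trained on one label set is tested on a dataset that only partially overlaps with it, one common approach is to let the model choose only among the shared classes and then compare its accuracy against its accuracy on the original benchmark. The sketch below illustrates that idea under stated assumptions. It is not the ObjectNet authors' evaluation code. The function names `restricted_top1` and `accuracy_drop` and the toy scores are hypothetical. The drop is reported in percentage points, which is one possible reading of a figure like "40-45%".

```python
from typing import Sequence


def restricted_top1(scores: Sequence[Sequence[float]],
                    labels: Sequence[int],
                    shared: Sequence[int]) -> float:
    """Top-1 accuracy when predictions are limited to the `shared` classes.

    scores: per-image scores over the model's full label set (hypothetical input).
    labels: ground-truth class index, in the model's label set, for each image.
    shared: indices of the classes that both datasets have in common.
    """
    if not labels:
        raise ValueError("need at least one labeled image")
    correct = 0
    for row, label in zip(scores, labels):
        # Take the argmax over the shared classes only; all others are ignored.
        pred = max(shared, key=lambda c: row[c])
        correct += int(pred == label)
    return correct / len(labels)


def accuracy_drop(baseline: float, new: float) -> float:
    """Difference in percentage points between two accuracies given as fractions."""
    return 100.0 * (baseline - new)


if __name__ == "__main__":
    # Toy data: 3 images, 3 model classes, of which classes 1 and 2 are shared.
    scores = [[0.1, 0.7, 0.2], [0.5, 0.3, 0.2], [0.2, 0.2, 0.6]]
    labels = [1, 2, 2]
    acc = restricted_top1(scores, labels, shared=[1, 2])
    print(f"restricted top-1: {acc:.3f}")
    print(f"drop vs. a 0.8 baseline: {accuracy_drop(0.8, acc):.1f} points")
```

Restricting the argmax to the shared classes means that a high score on a class the test set does not contain (class 0 for the second image above) cannot count as a prediction. The model is judged only on the labels both datasets can express.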

The dataset can currently be obtained by application and will soon be freely available for download. More details about the data collection process, the dataset statistics, and the evaluations can be found in the paper.