In a recent paper, researchers from the University of Freiburg, Germany, proposed a new CNN model for panoptic segmentation that achieves state-of-the-art results while also being more computationally efficient than existing models.

The new model, named EfficientPS after its capabilities (efficient panoptic segmentation), builds on the popular Mask R-CNN and EfficientNet, both of which have achieved strong results in the past: the former in instance segmentation, the latter in efficient feature extraction. The researchers designed the architecture to be more computationally efficient while still capturing fine features and long-range context. The final model uses an EfficientNet backbone augmented with a 2-way FPN, shared between two parallel heads: a semantic segmentation head and an instance segmentation head. The last module of the architecture is a panoptic fusion module, which takes the outputs of the two heads and produces the final panoptic segmentation mask.

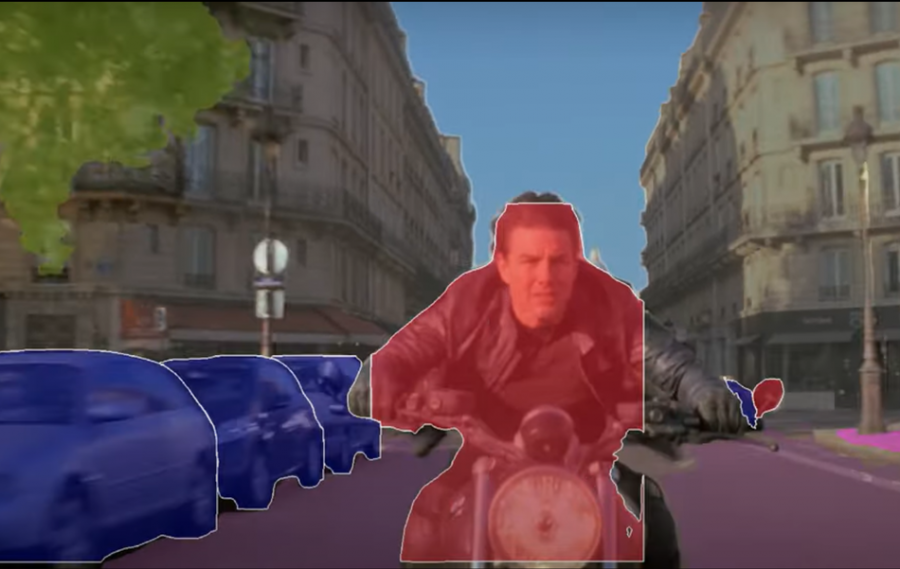
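
To make the last step more concrete, the sketch below shows one simple way that outputs from a semantic head and an instance head could be merged into a single panoptic map. It is a basic heuristic merge (paste confident instances first, then fill the rest from the semantic map), not the fusion module proposed in the paper. All names here (`Instance`, `fuse`, `VOID`, `min_score`) are hypothetical and chosen only for illustration.

```python
from typing import List, NamedTuple, Tuple

VOID = -1  # hypothetical label for pixels that neither head can explain


class Instance(NamedTuple):
    class_id: int            # "thing" class predicted by the instance head
    score: float             # confidence of the detection
    mask: List[List[bool]]   # binary mask with the same height/width as the image


def fuse(semantic: List[List[int]],
         instances: List[Instance],
         thing_classes: set,
         min_score: float = 0.5) -> List[List[Tuple[int, int]]]:
    """Merge head outputs into a map of (class_id, instance_id) per pixel.

    instance_id is 0 for "stuff" and void pixels, and 1, 2, ... for things.
    This is a simplified heuristic, not the method from the paper.
    """
    height, width = len(semantic), len(semantic[0])
    panoptic = [[None] * width for _ in range(height)]

    # 1. Paste instances, most confident first; earlier ones win overlaps.
    kept = sorted((i for i in instances if i.score >= min_score),
                  key=lambda i: i.score, reverse=True)
    for instance_id, inst in enumerate(kept, start=1):
        for y in range(height):
            for x in range(width):
                if inst.mask[y][x] and panoptic[y][x] is None:
                    panoptic[y][x] = (inst.class_id, instance_id)

    # 2. Fill the remaining pixels from the semantic head.
    for y in range(height):
        for x in range(width):
            if panoptic[y][x] is None:
                label = semantic[y][x]
                # A "thing" pixel without an instance cannot get an id: mark void.
                panoptic[y][x] = (VOID, 0) if label in thing_classes else (label, 0)
    return panoptic


# Toy 2x4 image: class 0 = road (stuff), class 1 = car (thing).
semantic = [[0, 1, 1, 1],
            [0, 0, 1, 1]]
instances = [
    Instance(1, 0.9, [[False, True, True, False], [False, False, True, False]]),
    Instance(1, 0.8, [[False, False, True, True], [False, False, False, False]]),
    Instance(1, 0.2, [[True, False, False, False], [False, False, False, False]]),
]
result = fuse(semantic, instances, thing_classes={1})
for row in result:
    print(row)
```

In this toy example the low-confidence detection is discarded, the overlapping pixel goes to the more confident car, and the one car pixel that no instance covers is marked as void rather than being assigned to an arbitrary instance.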

To train the proposed model, the researchers built a new dataset, the KITTI panoptic segmentation dataset, which provides panoptic segmentation labels for images already present in the KITTI segmentation datasets. In addition to the experiments on the new dataset, they conducted extensive experiments on three popular benchmarks: Cityscapes, Mapillary Vistas, and IDD (the Indian Driving Dataset). They showed that EfficientPS achieves state-of-the-art results on all four datasets and that it is more computationally efficient than existing models. Ablation studies were also performed to show how each component and architectural decision contributes to the final result.

The implementation of EfficientPS is open source. More details about the architecture, the new dataset, and the experiments can be found in the paper published on arXiv. A live demo of the method is available on the project's website, where users can select different models and see how EfficientPS performs on sample images.