Researchers from Samsung AI and the Skolkovo Institute of Science and Technology have published a paper proposing a new state-of-the-art method for multi-view 3D human pose estimation.

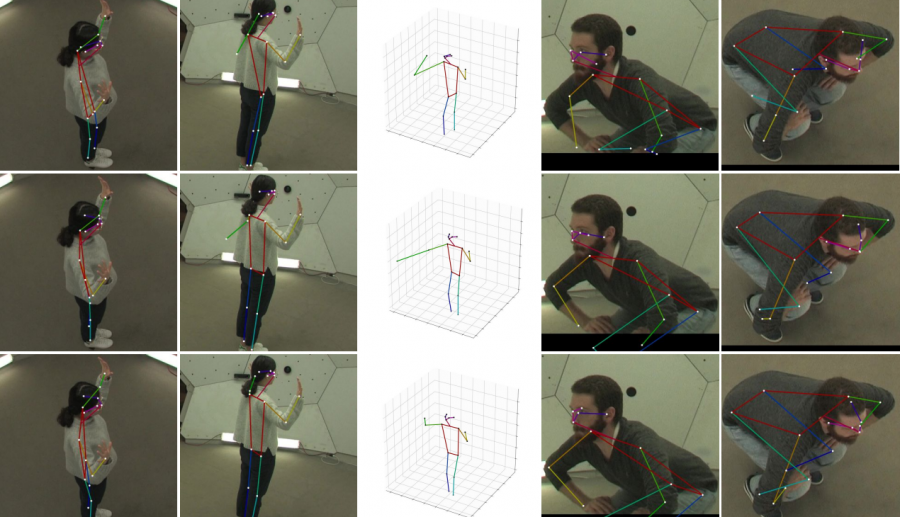

In the paper, titled “Learnable Triangulation of Human Pose”, the researchers propose two solutions to the problem of 3D human pose estimation. Both solutions combine information from multiple 2D camera views to produce an accurate 3D pose estimate.

The first solution is based on algebraic triangulation, and the second is based on volumetric triangulation.

Algebraic Triangulation

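In the first approach, the researchers aim to extract interpretable 2D keypoints on the human body. A backbone network (in this case a ResNet) processes each view and outputs a heatmap for every joint, and a soft-argmax converts each heatmap into 2D joint coordinates. The network also estimates a confidence weight for each joint in each view, and the 2D positions from all views are combined into 3D by a differentiable algebraic triangulation in which these confidences weight each view's contribution.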
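To make the algebraic step concrete, here is a minimal NumPy sketch of a soft-argmax over a 2D heatmap followed by a confidence-weighted linear (DLT-style) triangulation solved with SVD. It assumes known 3×4 camera projection matrices and takes the per-view confidence weights as plain inputs, whereas in the paper they are estimated by the network. The function names and the `beta` sharpness parameter are hypothetical choices for illustration, not the authors' code.

```python
import numpy as np


def soft_argmax_2d(heatmap, beta=100.0):
    """Differentiable estimate of the (x, y) peak of a 2D heatmap.

    `beta` is a hypothetical default that controls how sharply the
    softmax concentrates on the maximum.
    """
    h, w = heatmap.shape
    logits = beta * heatmap.reshape(-1)
    logits -= logits.max()  # subtract the max for numerical stability
    probs = np.exp(logits)
    probs /= probs.sum()
    probs = probs.reshape(h, w)
    ys, xs = np.mgrid[0:h, 0:w]  # pixel coordinate grids
    # Expected pixel position under the softmax distribution.
    return np.array([(probs * xs).sum(), (probs * ys).sum()])


def triangulate_weighted_dlt(proj_matrices, points_2d, weights):
    """Confidence-weighted linear triangulation of a single joint.

    proj_matrices: list of 3x4 camera matrices (assumed known).
    points_2d: list of (u, v) detections, one per view.
    weights: per-view confidences; passed in here as an assumption,
    whereas the paper predicts them with the network.
    """
    rows = []
    for P, (u, v), w in zip(proj_matrices, points_2d, weights):
        # Each view yields u*p3.X - p1.X = 0 and v*p3.X - p2.X = 0,
        # scaled by that view's confidence.
        rows.append(w * (u * P[2] - P[0]))
        rows.append(w * (v * P[2] - P[1]))
    A = np.stack(rows)
    # The homogeneous solution is the right singular vector
    # associated with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]
```

With noise-free detections the weights do not change the result; they matter when some views are unreliable, because down-weighting a view shrinks its rows in the least-squares system that the SVD solves.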

Volumetric Triangulation

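In the second solution, the input frames again pass through a ResNet feature extractor, followed by additional convolutional layers. The resulting 2D feature maps are unprojected into a shared 3D volume using the camera parameters and aggregated across views. 3D convolutions then refine the aggregated volume into per-joint 3D heatmaps, and a soft-argmax (a softmax followed by an expectation over voxel positions) produces the final 3D keypoint coordinates.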
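The following NumPy sketch illustrates the volumetric idea for a single joint under simplifying assumptions: single-channel per-view heatmaps stand in for the multi-channel feature maps, each voxel samples them by nearest-neighbour lookup at its projection, views are combined by a plain average, and the learned 3D convolutional refinement is omitted entirely. The function names and parameters are hypothetical; only the unproject, aggregate, then soft-argmax structure follows the description above.

```python
import numpy as np


def unproject_and_aggregate(feature_maps, proj_matrices, voxel_centers):
    """Average per-view 2D values into a 3D grid (simplified sketch).

    feature_maps: list of (H, W) single-channel maps, one per view
    (an assumption; the paper uses multi-channel feature maps).
    proj_matrices: list of 3x4 camera matrices mapping to pixel coords.
    voxel_centers: (N, 3) array of voxel centre positions.
    """
    n = voxel_centers.shape[0]
    homog = np.hstack([voxel_centers, np.ones((n, 1))])
    total = np.zeros(n)
    counts = np.zeros(n)
    for fmap, P in zip(feature_maps, proj_matrices):
        h, w = fmap.shape
        proj = homog @ P.T  # (N, 3) homogeneous pixel coordinates
        depth = proj[:, 2]
        in_front = depth > 1e-6
        safe_depth = np.where(in_front, depth, 1.0)
        # Nearest-neighbour sampling (bilinear would be smoother).
        u = np.round(proj[:, 0] / safe_depth).astype(int)
        v = np.round(proj[:, 1] / safe_depth).astype(int)
        valid = in_front & (u >= 0) & (u < w) & (v >= 0) & (v < h)
        total[valid] += fmap[v[valid], u[valid]]
        counts[valid] += 1
    # Plain averaging across views is an assumed aggregation rule.
    return np.where(counts > 0, total / np.maximum(counts, 1), 0.0)


def soft_argmax_3d(scores, voxel_centers, beta=100.0):
    """Softmax over voxels, then the expected voxel position."""
    logits = beta * scores
    logits = logits - logits.max()  # numerical stability
    probs = np.exp(logits)
    probs /= probs.sum()
    return probs @ voxel_centers
```

Because a voxel only scores highly when its projection lands on the peak in every view, the averaged volume peaks at the position that is consistent across cameras, so the soft-argmax over the volume plays the role of triangulation.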

The evaluation shows that the proposed method achieves state-of-the-art multi-view results on the Human3.6M dataset. However, the method assumes that the video streams from the different cameras are synchronized.

The implementation of the proposed method, together with video demonstrations, is available here. The paper is available as a preprint on arXiv.