A group of researchers from the University of California, Davis has developed a new instance segmentation method that runs in real time.

The method, called YOLACT++, was inspired by YOLO, a widely known and well-performing object detection method that runs in real time. In contrast to object detection, most methods for semantic or instance segmentation have prioritized accuracy over speed. For this reason, the researchers designed YOLACT, which borrows some ideas from YOLO to make real-time instance segmentation possible.

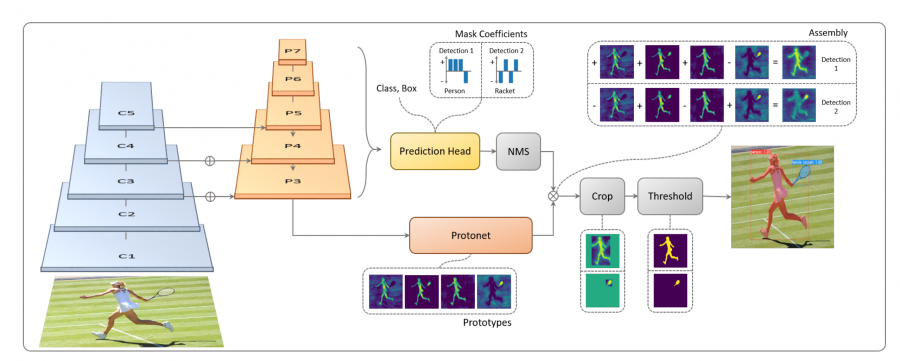

YOLACT breaks instance segmentation into two smaller tasks that run in parallel: generating a dictionary of prototype masks and predicting a set of linear combination coefficients per instance. The final mask for each instance is a linear combination of the prototypes, weighted by that instance’s coefficients. In this way, the method implicitly learns to localize instance masks, so it can skip the explicit localization step that is common in instance segmentation methods.
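The mask assembly step can be illustrated with a minimal sketch in plain Python. It is not the authors' implementation: the function name `assemble_mask`, the toy 2x2 prototypes, the sigmoid, and the 0.5 threshold are assumptions made for illustration, and a real model would run the same computation as a single matrix product on a GPU.

```python
import math


def assemble_mask(prototypes, coefficients):
    """Combine k prototype masks (each H x W) using k per-instance coefficients.

    Hypothetical helper for illustration: each output pixel is the sigmoid of
    the coefficient-weighted sum of the prototype values at that pixel.
    """
    if len(prototypes) != len(coefficients):
        raise ValueError("need exactly one coefficient per prototype")
    height = len(prototypes[0])
    width = len(prototypes[0][0])
    mask = []
    for y in range(height):
        row = []
        for x in range(width):
            logit = sum(c * p[y][x] for c, p in zip(coefficients, prototypes))
            row.append(1.0 / (1.0 + math.exp(-logit)))
        mask.append(row)
    return mask


# Two toy prototypes: one activates on the left column, one on the right.
prototypes = [
    [[1.0, 0.0], [1.0, 0.0]],
    [[0.0, 1.0], [0.0, 1.0]],
]
# An instance on the left: positive weight for the first prototype,
# negative weight for the second.
coefficients = [4.0, -4.0]

mask = assemble_mask(prototypes, coefficients)
binary = [[1 if value > 0.5 else 0 for value in row] for row in mask]
print(binary)  # [[1, 0], [1, 0]]
```

Because the prototypes are shared by every instance in the image, only the small coefficient vector is predicted per instance, which is what keeps the per-instance cost low.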

In addition, the researchers introduced several other changes that improve either accuracy or inference time. They propose a new variant of standard non-maximum suppression (NMS) called Fast NMS. They also add deformable convolutions to the encoder’s backbone network and a novel mask re-scoring branch. The whole architecture of YOLACT++ is depicted in the diagram below.

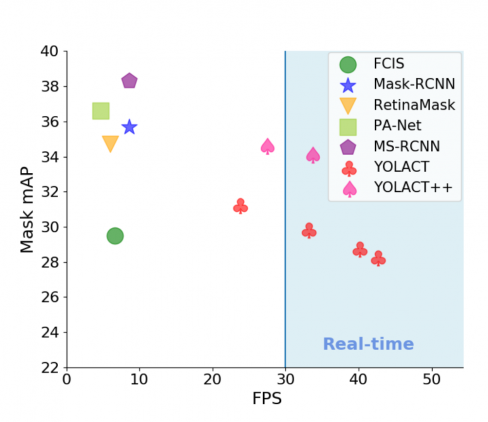
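The idea behind Fast NMS can be sketched in a few lines of Python. This is a simplified, single-class illustration and not the authors' code: the helper names `iou` and `fast_nms`, the `(x1, y1, x2, y2)` box format, and the 0.5 threshold are assumptions. The key difference from standard NMS is that a box is discarded if it overlaps any higher-scoring box, even one that has itself been discarded, so every decision can be computed independently (and, in a real implementation, in parallel as matrix operations).

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def fast_nms(boxes, scores, iou_threshold=0.5):
    """Return indices of kept boxes, highest score first (hypothetical helper)."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    kept = []
    for rank, idx in enumerate(order):
        # Largest overlap with ANY higher-scoring box, whether or not that
        # box was itself suppressed. No sequential dependency between boxes.
        max_overlap = max(
            (iou(boxes[order[r]], boxes[idx]) for r in range(rank)),
            default=0.0,
        )
        if max_overlap <= iou_threshold:
            kept.append(idx)
    return kept


boxes = [
    (0.0, 0.0, 10.0, 10.0),    # A
    (3.0, 0.0, 13.0, 10.0),    # B overlaps A (IoU about 0.54)
    (6.0, 0.0, 16.0, 10.0),    # C overlaps B (about 0.54) but not A (0.25)
    (50.0, 50.0, 60.0, 60.0),  # D is isolated
]
scores = [0.9, 0.8, 0.7, 0.6]

print(fast_nms(boxes, scores))  # [0, 3]
```

In this toy case standard NMS would keep C, because B is removed before it gets a chance to suppress anything. Fast NMS removes C as well, so it can discard slightly more boxes in exchange for a computation that parallelizes well.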

The proposed method was evaluated on two common datasets: MS COCO and Pascal VOC 2012. Comparisons with existing state-of-the-art methods show that YOLACT++ achieves almost 4 times faster inference while retaining competitive segmentation accuracy.

The researchers open-sourced the implementation of YOLACT++, and the code is available on GitHub. More details about the network architecture and the evaluations can be found in the pre-print paper published on arXiv.