Google introduced TensorFlow Datasets – a collection of public, open-source datasets ready to use with TensorFlow.

TensorFlow was lagging behind Pytorch when it comes to ease of data loading. Pytorch was a clear winner in this part having well-designed data loading interfaces and (some) public datasets exposed for direct use through them.

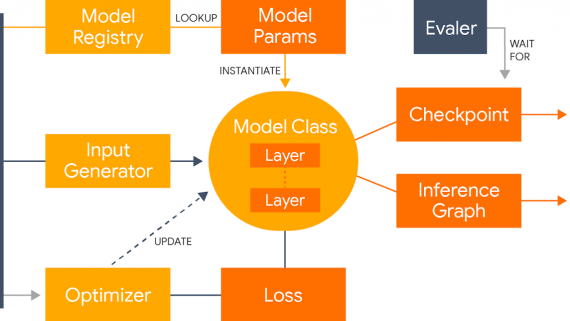

Now, in the new TensorFlow 2.0, one of the major improvements is data loading besides making Keras the central, high-level API for model building. TensorFlow Datasets will enable researchers and deep learning practitioners to load data with ease. It will provide common interfaces for loading data and it will expose the most popular, public datasets. Datasets in TensorFlow Datasets can be exposed as tf.data.Datasets (a core dataset class) or as NumPy arrays.

TensorFlow Datasets will enable faster development by saving a significant amount of time spent on data retrieval and loading. It provides functionality for fetching source data, preparing the data in common format on this and it uses the tf.data API to build high-performance input pipelines.

Together with TF Datasets, 29 public research datasets were launched including CIFAR, ImageNet2012, MNIST, Street View House Numbers, the 1 Billion Word Language Model Benchmark, and the Large Movie Reviews Dataset. Tensorflow also released guidelines on adding a dataset. Users are encouraged to add their datasets to TensorFlow Datasets and make the repository of available datasets larger. More about TensorFlow Datasets can be read in the official blog post.