The clustering of images seems to be a well-researched topic. But in fact, little work has been done to adapt it to the end-to-end training of visual features on large-scale datasets.

The existence and usefulness of ImageNet, a fully-supervised dataset, have contributed to the pre-training of convolutional neural networks. However, ImageNet is not so large by today’s standards: it “only” contains a million images. Now we need to move to the next level and build a bigger and more diverse dataset, potentially consisting of billions of images.

No Supervision Required

Can you imagine the number of manual annotations required for this kind of dataset? It would be enormous. Replacing labels with raw metadata is not a good solution either, as it biases the visual representations with unpredictable consequences.

So, it looks like we need methods that can be trained on internet-scale datasets with no supervision. That’s precisely what a Facebook AI Research team suggests. DeepCluster is a novel clustering approach for the large-scale end-to-end training of convolutional neural networks.

The authors of this method claim that the resulting model outperforms the current state of the art by a significant margin on all the standard benchmarks. But first, let’s review the previous work in this research area.

Previous Works

All the related work can be arranged into three groups:

- Unsupervised learning of features: for example, Yang et al. iteratively learn convnet features and clusters with a recurrent framework, while Bojanowski and Joulin learn visual features on a large dataset with a loss that attempts to preserve the information flowing through the network.

- Self-supervised learning: for instance, Doersch et al. use the prediction of the relative position of patches in an image as a pretext task, while Noroozi and Favaro train a network to spatially rearrange shuffled patches. These approaches are usually domain dependent.

- Generative models: for example, Donahue et al. and Dumoulin et al. have shown that using a GAN with an encoder results in competitive visual features.

State-of-the-art idea

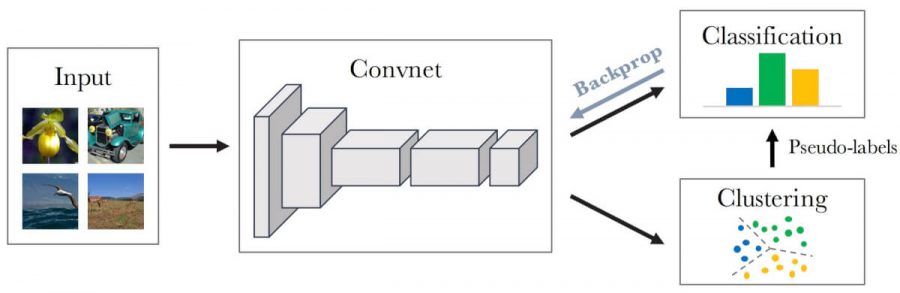

DeepCluster is a clustering method presented recently by a Facebook AI Research team. The method iteratively groups the features with a standard clustering algorithm, k-means, and uses the subsequent assignments as supervision to update the weights of the network. For simplicity, the researchers have focused their study on k-means, but other clustering approaches can also be used, such as Power Iteration Clustering (PIC).

Such an approach has a significant advantage over the self-supervised methods as it doesn’t require specific signals from the inputs or extended domain knowledge. As we will see later, DeepCluster achieves significantly higher performance than previously published unsupervised methods.

Let’s now have a closer look at the design of this model.

Method overview

The performance of random convolutional networks is intimately tied to their convolutional structure, which gives a strong prior on the input signal. The idea of DeepCluster is to exploit this weak signal to bootstrap the discriminative power of a convnet.

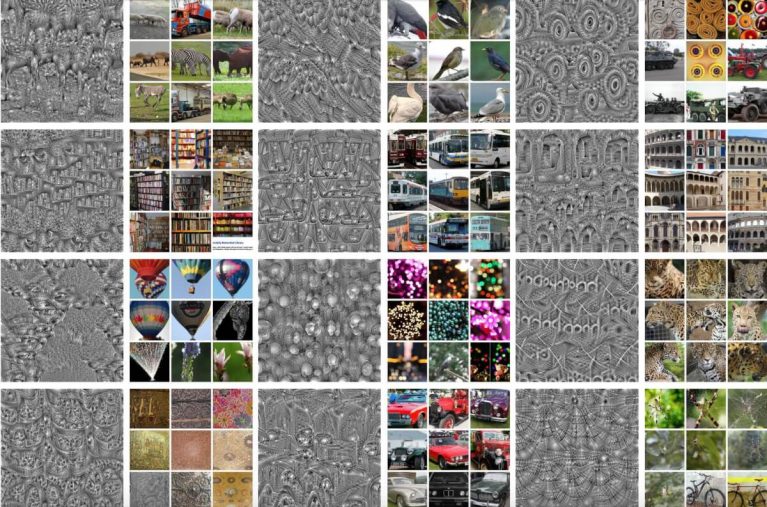

As illustrated below, the method iteratively clusters deep features and uses the cluster assignments as pseudo-labels to learn the parameters of the convnet.
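To make the alternation concrete, here is a minimal sketch of the clustering half of the loop in plain Python. It is not the authors' implementation: the function names, the toy 2-D "features", and the deterministic centroid initialization are assumptions made for illustration. In the real method the features come from the convnet, and the returned pseudo-labels would serve as targets for a standard classification loss before the next round of clustering.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans_pseudo_labels(features, k, iterations=10):
    """Run plain k-means and return one cluster id (pseudo-label) per feature vector."""
    n = len(features)
    # Assumption: evenly spaced initial centroids keep the example deterministic;
    # practical k-means usually starts from random or k-means++ seeds.
    centroids = [list(features[i * n // k]) for i in range(k)]
    labels = [0] * n
    for _ in range(iterations):
        # Assignment step: each vector joins its nearest centroid.
        labels = [
            min(range(k), key=lambda c: squared_distance(f, centroids[c]))
            for f in features
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [f for f, label in zip(features, labels) if label == c]
            if members:  # an empty cluster keeps its old centroid in this sketch
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Toy stand-in for convnet features: two well-separated groups of 2-D points.
toy_features = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
pseudo_labels = kmeans_pseudo_labels(toy_features, k=2)
print(pseudo_labels)
```

With these two well-separated groups, the first three vectors end up in one cluster and the last three in the other. Those cluster ids are what the network would be trained to predict in the next step.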

This type of alternating procedure is prone to trivial solutions, which we’re going to discuss briefly right now:

- Empty clusters. Automatically reassigning empty clusters during the k-means optimization solves this problem.

- Trivial parametrization. If the vast majority of images are assigned to a few clusters, the parameters will exclusively discriminate between them. The solution to this issue lies in sampling images based on a uniform distribution over the classes, or pseudo-labels.
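The second fix can be sketched in a few lines: first pick a cluster uniformly at random, then pick an image uniformly within that cluster. This is a hedged illustration rather than the authors' code; `sample_uniform_over_clusters` and the toy label list are hypothetical names and data.

```python
import random
from collections import defaultdict


def sample_uniform_over_clusters(pseudo_labels, num_samples, seed=0):
    """Draw image indices so that every non-empty cluster is equally likely."""
    rng = random.Random(seed)
    members = defaultdict(list)
    for index, label in enumerate(pseudo_labels):
        members[label].append(index)
    clusters = sorted(members)  # only clusters that contain at least one image
    # Pick a cluster first, then one of its images, both uniformly at random.
    return [rng.choice(members[rng.choice(clusters)]) for _ in range(num_samples)]


# Hypothetical imbalanced assignment: 98 images in cluster 0, 2 images in cluster 1.
toy_labels = [0] * 98 + [1] * 2
batch = sample_uniform_over_clusters(toy_labels, num_samples=2000)
share_small = sum(1 for i in batch if toy_labels[i] == 1) / len(batch)
print(f"share of draws from the small cluster: {share_small:.2f}")
```

Sampling images directly would pick the small cluster about 2% of the time. Here it is picked roughly half of the time, so the network cannot minimize the loss by only separating the few dominant clusters.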

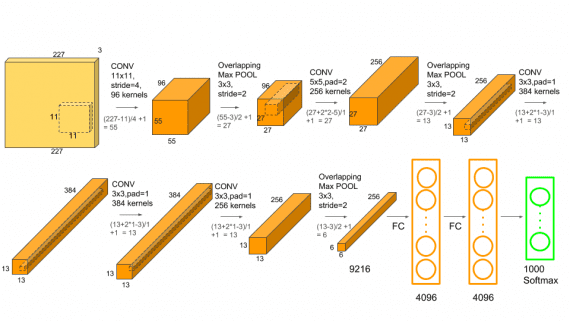

DeepCluster is based on a standard AlexNet architecture with five convolutional layers and three fully connected layers. To remove color and increase local contrast, the researchers apply a fixed linear transformation based on Sobel filters.
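The Sobel preprocessing is easy to picture with a small example. The sketch below averages the color channels into a grayscale image and then applies the two standard 3x3 Sobel kernels. The channel averaging, the helper names, and the tiny test image are assumptions for illustration, not details taken from the paper.

```python
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]  # responds to horizontal intensity changes
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]  # responds to vertical intensity changes


def to_grayscale(rgb_image):
    """Average the three color channels of every pixel (assumed color removal)."""
    return [[sum(pixel) / 3.0 for pixel in row] for row in rgb_image]


def filter3x3(gray, kernel):
    """Slide a 3x3 kernel over the image without padding (cross-correlation)."""
    height, width = len(gray), len(gray[0])
    return [
        [
            sum(kernel[i][j] * gray[r + i][c + j] for i in range(3) for j in range(3))
            for c in range(width - 2)
        ]
        for r in range(height - 2)
    ]


def sobel_channels(rgb_image):
    """Return the two gradient maps that replace the original RGB channels."""
    gray = to_grayscale(rgb_image)
    return filter3x3(gray, SOBEL_X), filter3x3(gray, SOBEL_Y)


# 4x4 toy image: dark left half, bright right half (a single vertical edge).
dark, bright = (0, 0, 0), (3, 3, 3)
toy_image = [[dark, dark, bright, bright] for _ in range(4)]
grad_x, grad_y = sobel_channels(toy_image)
print(grad_x)
print(grad_y)
```

The horizontal-gradient map responds strongly to the vertical edge, while the vertical-gradient map stays at zero. Because both kernels sum to zero, flat regions produce no response, which is why the transform emphasizes local contrast.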

So, the model doesn’t look complicated, but let’s check its performance on the ImageNet classification and transfer tasks.

Results

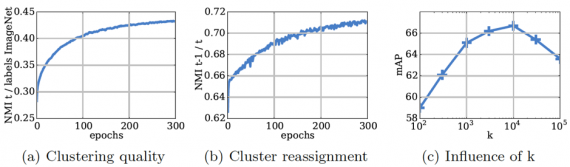

The results of preliminary studies are demonstrated below:

- (a) the evolution of the Normalized Mutual Information (NMI) between the cluster assignments and the ImageNet labels during training;

- (b) the development of the model’s stability along the training epochs;

- (c) the impact of the number of clusters k on the quality of the model (k = 10,000 gives the best performance).
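For readers unfamiliar with the metric in panel (a), here is a small sketch of how NMI between two labelings can be computed. It normalizes the mutual information by the geometric mean of the two entropies; other normalizations exist, so treat this choice, the function names, and the zero-entropy convention as assumptions.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in nats) of a list of labels."""
    n = len(labels)
    return -sum((count / n) * math.log(count / n) for count in Counter(labels).values())


def mutual_information(a, b):
    """Mutual information (in nats) between two labelings of the same items."""
    n = len(a)
    count_a, count_b = Counter(a), Counter(b)
    joint = Counter(zip(a, b))
    return sum(
        (n_ab / n) * math.log(n_ab * n / (count_a[x] * count_b[y]))
        for (x, y), n_ab in joint.items()
    )


def nmi(a, b):
    """Mutual information divided by the geometric mean of the entropies."""
    h_a, h_b = entropy(a), entropy(b)
    if h_a == 0.0 or h_b == 0.0:
        return 0.0  # convention chosen here: a constant labeling carries no information
    return mutual_information(a, b) / math.sqrt(h_a * h_b)


clusters = [0, 0, 1, 1]
print(nmi(clusters, [1, 1, 0, 0]))  # same grouping, different cluster names
print(nmi(clusters, [0, 1, 0, 1]))  # grouping unrelated to the clusters
```

The score depends only on how items are grouped, not on the cluster ids themselves, so it is close to 1 when two assignments agree up to renaming and 0 when one tells us nothing about the other.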

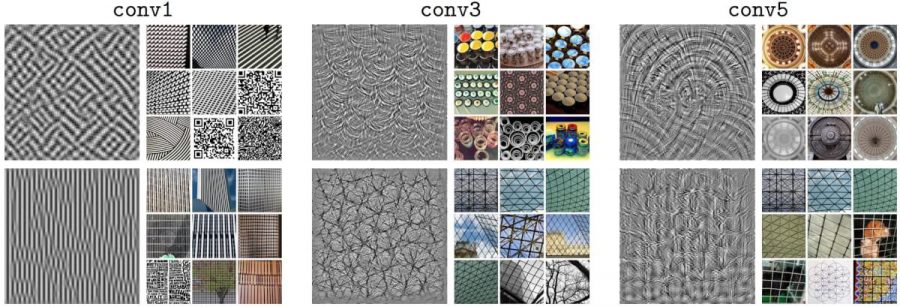

To assess the quality of a target filter, the researchers learn an input image that maximizes its activation. The figure below shows these synthetic filter visualizations and the top 9 activated images from a subset of 1 million images from YFCC100M.

Deeper layers in the network seem to capture larger textural structures. However, it looks like some filters in the last convolutional layers merely replicate the texture already captured in the previous layers.

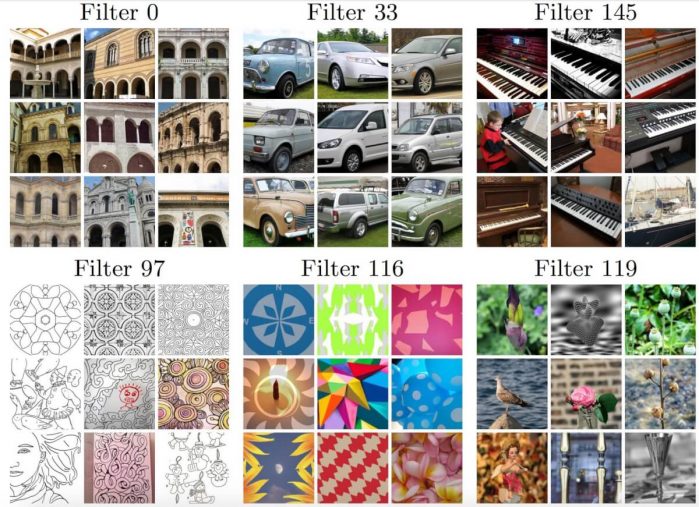

The results below come from the last convolutional layers, this time using the VGG-16 architecture instead of AlexNet.

As you can see, the filters learned without any supervision can capture quite complex structures.

The next figure shows the top 9 activated images of some filters that seem to be semantically coherent. The filters in the top row reflect structures that are highly correlated with object class, while the filters in the bottom row seem to trigger on style.

Comparison

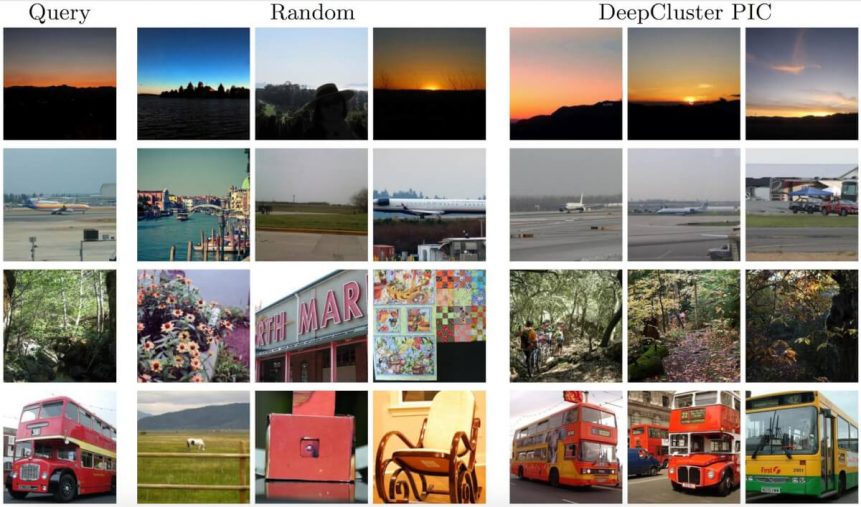

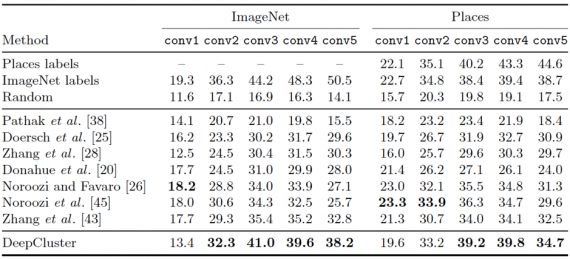

To compare DeepCluster to other methods, the researchers train a linear classifier on top of different frozen convolutional layers. The table below reports the classification accuracy of different state-of-the-art approaches on the ImageNet and Places datasets.

On ImageNet, DeepCluster outperforms the state of the art from the conv2 to conv5 layers by 1-6%. The poor performance in the first layer is probably due to the Sobel filtering discarding color. Remarkably, the performance gap between DeepCluster and a supervised AlexNet is only around 4% at the conv2-conv3 layers but rises to 12.3% at conv5, showing where AlexNet stores most of its class-level information.

The same experiment on the Places dataset reveals that DeepCluster yields conv3-conv4 features that are comparable to those trained with ImageNet labels. This implies that when the target task is sufficiently far from the domain covered by ImageNet, labels are less important.

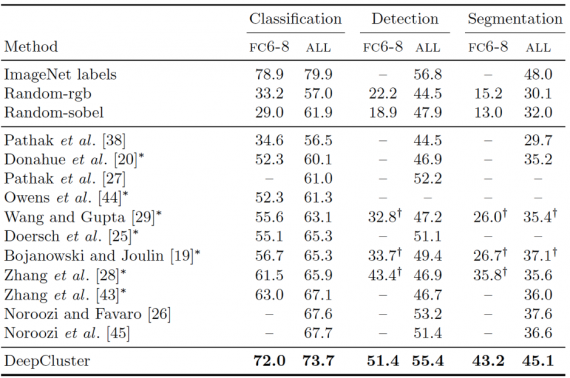

The next table summarizes the comparisons of DeepCluster with other feature learning approaches on three tasks: classification, detection, and semantic segmentation. As you can see, DeepCluster outperforms all previous unsupervised methods on all three tasks, with the most substantial improvement in semantic segmentation.

Bottom Line

DeepCluster, proposed by the Facebook AI Research team, achieves performance that is significantly better than the previous state of the art on every standard transfer task. What is more, when tested on the Pascal VOC 2007 object detection task with fine-tuning, DeepCluster is only 1.4% below the supervised topline.

This approach makes few assumptions about the inputs and doesn’t require much domain-specific knowledge, which makes it a good candidate for learning deep representations in specific domains where labeled datasets are not available.
