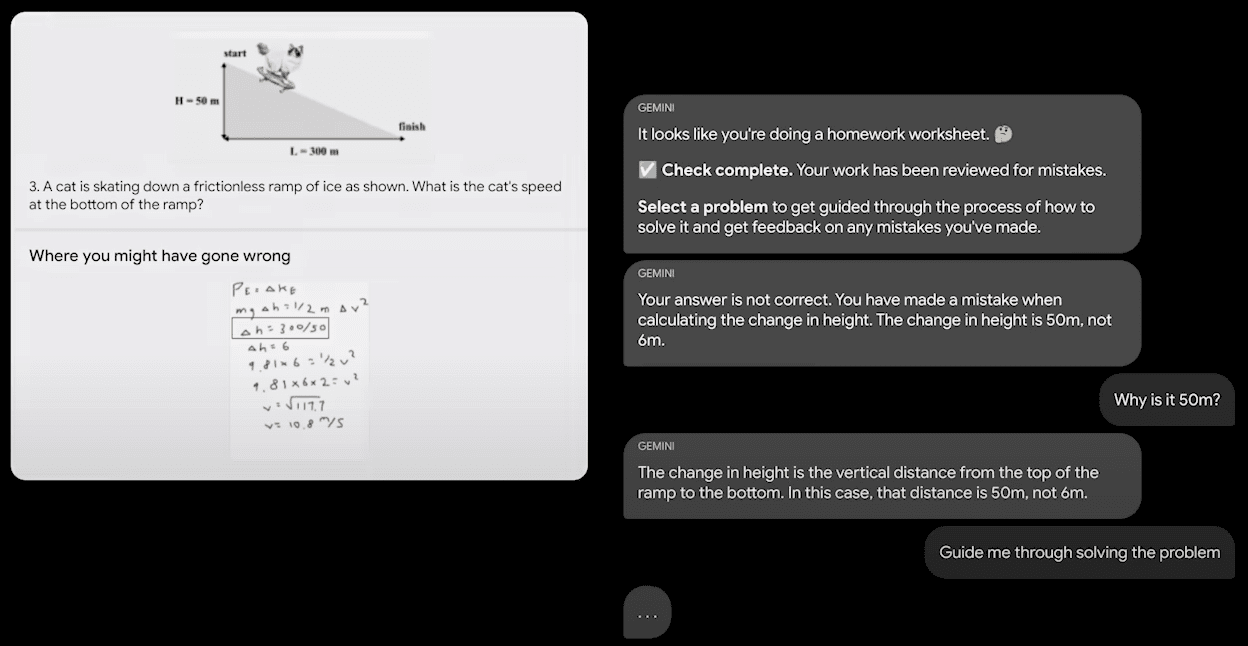

Google has announced Gemini, a family of three language models. The most capable, Gemini Ultra, is slated to reach developers and enterprise customers in early 2024. The mid-range Gemini Pro is being integrated into a range of Google products, and the smallest, Gemini Nano, is designed to run on mobile devices.

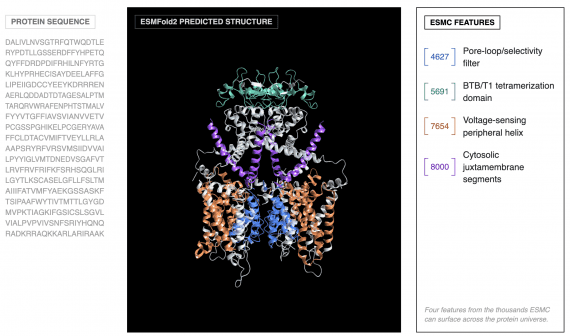

All three models are multimodal: they can process text, code, audio, images, and video. Gemini Ultra exceeds previous state-of-the-art results on 30 of the 32 benchmarks commonly used in large language model research.

With a score of 90.0%, Gemini Ultra is the first model to outperform human experts on MMLU (Massive Multitask Language Understanding), a benchmark spanning 57 subjects such as mathematics, physics, history, law, medicine, and ethics.

Google says Gemini can extract insights from hundreds of thousands of documents by reading, filtering, and interpreting their contents, which it expects could accelerate breakthroughs in fields from science to finance.

Gemini can also understand, explain, and generate code in popular languages such as Python, Java, C++, and Go. A specialized version of the model powers AlphaCode 2, a more advanced code-generation system that solves competitive programming problems involving complex mathematics and theoretical computer science.

Bard already uses a fine-tuned version of Gemini Pro for English-language queries. The Pixel 8 Pro is the first smartphone to run Gemini Nano, which powers text summarization in the Recorder app and Smart Reply suggestions in messaging apps through Gboard. Over the coming months, Gemini will be integrated into more Google products, including Search, Ads, Chrome, and Duet AI.

Starting December 13, 2023, developers and enterprise customers can access Gemini Pro through the Gemini API in Google AI Studio or Google Cloud Vertex AI. Android developers can also build with Gemini Nano through AICore, a new system capability in Android 14, starting with the Pixel 8 Pro.

Google has also published a technical report detailing Gemini's capabilities and benchmark results.