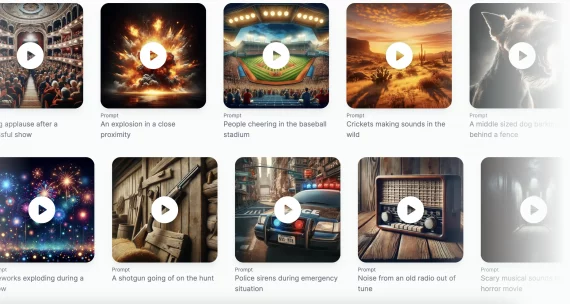

The article provides an overview of music datasets. Datasets are designed to train models of music generation, recognition, and analysis.

NSynth

The largest dataset consists of 305,979 musical notes, including pitch, timbre, and envelope. The dataset includes recordings of 1006 musical instruments from commercial sample libraries and is annotated based on the instruments used (acoustic, electronic, or synthetic) and sound parameters. The dataset contains instruments such as flute, guitar, piano, organ, and others.

https://magenta.tensorflow.org/datasets/nsynth

MAESTRO

MAESTRO (MIDI and Audio Edited for Synchronous Tracks and Organization) contains over 200 hours of annotated recordings of international piano competitions over the past ten years.

https://magenta.tensorflow.org/datasets/maestro

URMP

URMP is a dataset for audio-visual analysis of musical performances. The dataset contains several annotated pieces of music with several instruments, collected from separately recorded performances of individual tracks.

http://www2.ece.rochester.edu/projects/air/projects/URMP.html

Lakh MIDI v0.1

The dataset contains 176,581 unique MIDI files, 45,129 of which are mapped to samples from the Million Song Dataset. The dataset is aimed at facilitating large-scale search for music information based on text and audio content.

https://colinraffel.com/projects/lmd/

Music21

Music21 contains musical performances from 21 categories and is aimed at solving research problems (for example, finding an answer to the question: “Which group used these chords for the first time?”)

More datasets are available here.