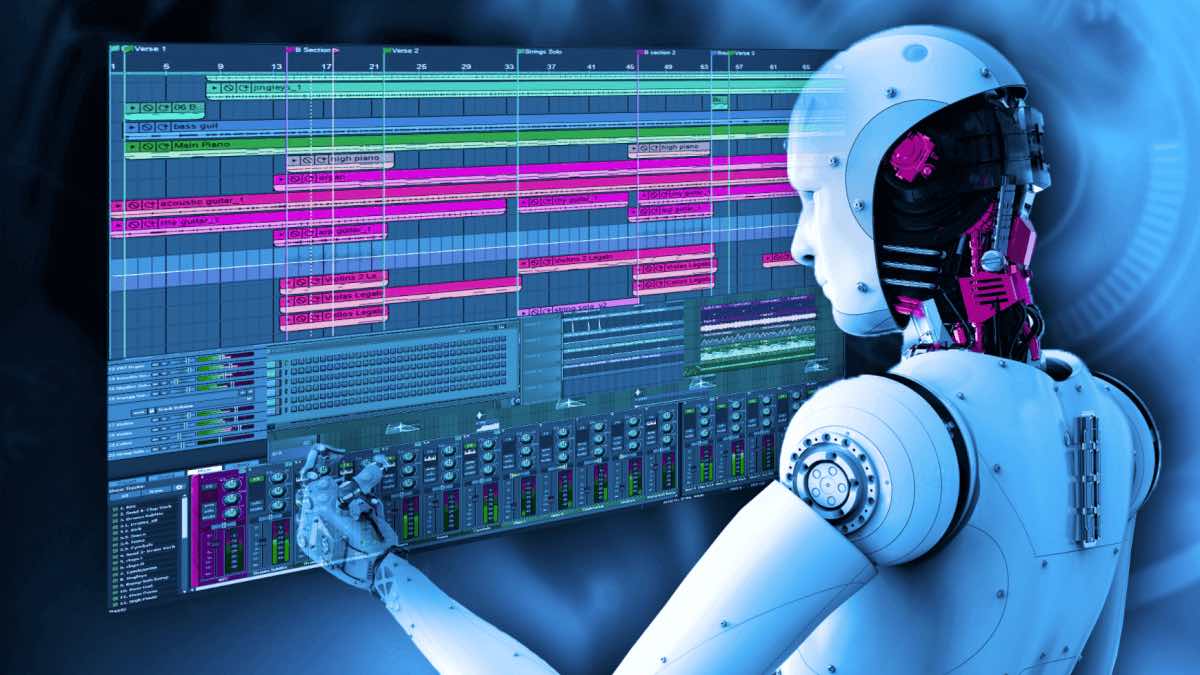

Magenta, an open source research project started by Google AI, has presented Music Transformer, a new neural network for music generation. According to Magenta’s blog post and a preprint published on arXiv, the model can generate long pieces of music with improved coherence.

Music Transformer is an attention-based neural network model. It uses an event-based representation of music, and its self-attention mechanism gives it direct access to earlier events, which helps it generate more coherent music.
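To make "event-based representation" more concrete, here is a minimal sketch of how a handful of notes could be flattened into one time-ordered stream of events. The `Note` tuple format, the `notes_to_events` helper, and the `NOTE_ON_*` / `NOTE_OFF_*` / `TIME_SHIFT_*` labels are all assumptions made for illustration; the actual vocabulary used by Magenta may differ (for example, it may also encode velocity).

```python
from typing import List, Tuple

# Hypothetical note format: (MIDI pitch, start step, end step).
Note = Tuple[int, int, int]


def notes_to_events(notes: List[Note]) -> List[str]:
    """Flatten notes into a single time-ordered list of event labels."""
    # Each note contributes two boundaries. The middle field makes
    # note-offs sort before note-ons that happen at the same step.
    boundaries = []
    for pitch, start, end in notes:
        boundaries.append((start, 1, f"NOTE_ON_{pitch}"))
        boundaries.append((end, 0, f"NOTE_OFF_{pitch}"))
    boundaries.sort()

    events: List[str] = []
    now = 0
    for time, _, label in boundaries:
        if time > now:
            # Advance the clock with an explicit time-shift event.
            events.append(f"TIME_SHIFT_{time - now}")
            now = time
        events.append(label)
    return events


# A three-note melody: C4, E4, then C4 again, four steps each.
melody = [(60, 0, 4), (64, 4, 8), (60, 8, 12)]
events = notes_to_events(melody)
print(events)
```

Once music is a flat sequence like this, an attention-based model can treat it much like a sentence: when predicting the next event, it can look back at any earlier event in the list.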

The main idea behind this approach is to leverage the fact that music relies heavily on repetition to build structure and meaning. Inspired by the Transformer (Vaswani et al., 2017), a sequence model based on self-attention, the Magenta team proposed that a Transformer could also be well suited to modeling music.

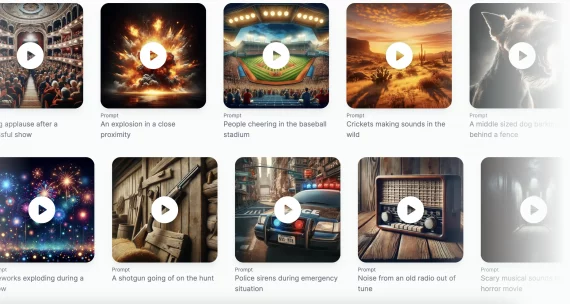


For the final model, the researchers propose a Transformer with relative attention. They found that this kind of model can keep track of regularity based on relative distances, event orderings, and periodicity.
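The idea of relative attention can be sketched in a few lines of plain Python. In this simplified, single-head version, each attention score is the sum of a content term (query times key) and a position term (query times an embedding of how far back the key is). The function name `relative_attention`, the `rel_emb` table, and the toy numbers are assumptions for illustration. This is a naive per-pair computation, not Magenta's implementation.

```python
import math
from typing import List, Tuple

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def relative_attention(
    q: List[Vector], k: List[Vector], v: List[Vector], rel_emb: List[Vector]
) -> Tuple[List[Vector], List[List[float]]]:
    """Causal single-head attention with a relative-position term.

    rel_emb[d] is a (hypothetical) embedding for "d steps back".
    Returns the output vectors and the attention weights per position.
    """
    dim = len(q[0])
    outputs, all_weights = [], []
    for i in range(len(q)):
        logits = []
        for j in range(i + 1):  # only attend to the past and present
            content = dot(q[i], k[j])
            position = dot(q[i], rel_emb[i - j])
            logits.append((content + position) / math.sqrt(dim))
        weights = softmax(logits)
        outputs.append(
            [sum(w * v[j][c] for j, w in enumerate(weights))
             for c in range(len(v[0]))]
        )
        all_weights.append(weights)
    return outputs, all_weights


# Toy setup: keys are zero, so only relative distance matters, and the
# embedding for "2 steps back" is the only one with a non-zero score.
n = 5
q = [[1.0, 0.0] for _ in range(n)]
k = [[0.0, 0.0] for _ in range(n)]
v = [[float(j), 1.0] for j in range(n)]
rel_emb = [[0.0, 0.0], [0.0, 0.0], [4.0, 0.0], [0.0, 0.0], [0.0, 0.0]]

outputs, weights = relative_attention(q, k, v, rel_emb)
```

In this toy setup, every position from the third onward puts most of its attention on the event exactly two steps earlier, regardless of where it sits in the sequence. That is the kind of distance-based, periodic pattern the relative term is meant to capture.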

The method was evaluated on the “J.S. Bach Chorales” dataset, a canonical benchmark for generative models of music. In addition, a qualitative human evaluation compared the perceived quality of the generated samples. The proposed Transformer with relative attention showed strong results both on the test dataset and in the human evaluation.

A thorough explanation can be found on Magenta’s page, along with a comparison against PerformanceRNN and LSTM models using music samples. The paper is available on arXiv, and the code will be released soon.