Google has updated Bard, expanding it to 46 languages and more than 200 countries, including Brazil and countries across Europe. New features include image input, conversation management, and customizable responses.

With Google Lens integrated, the updated Bard now supports multimodal queries. Users can include an image alongside a text prompt, and Bard will take both into account when responding.

Bard can now also read its responses aloud. This is particularly useful for language learners, who can hear the correct pronunciation of a word or phrase. The audio feature is available in all languages Bard supports.

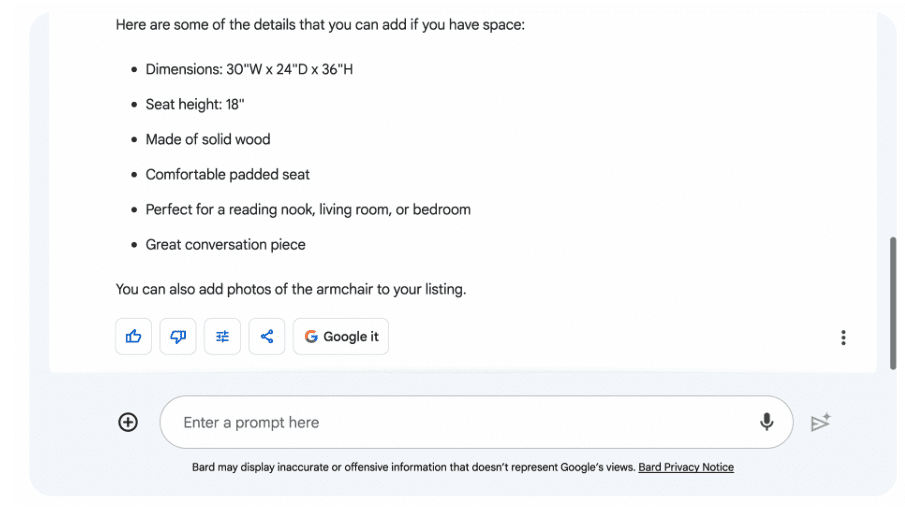

Users can now adjust the tone and style of Bard’s responses, choosing from five options: simple, lengthy, concise, professional, or casual. For instance, a user can ask Bard to write a product advertisement and then shorten the result by picking an option from the drop-down list. This feature is currently available only in English and will be extended to other languages in the future.

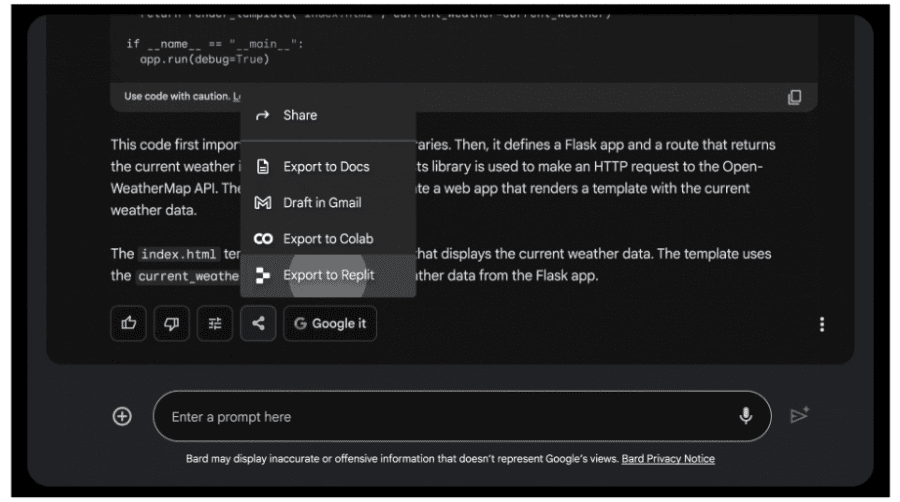

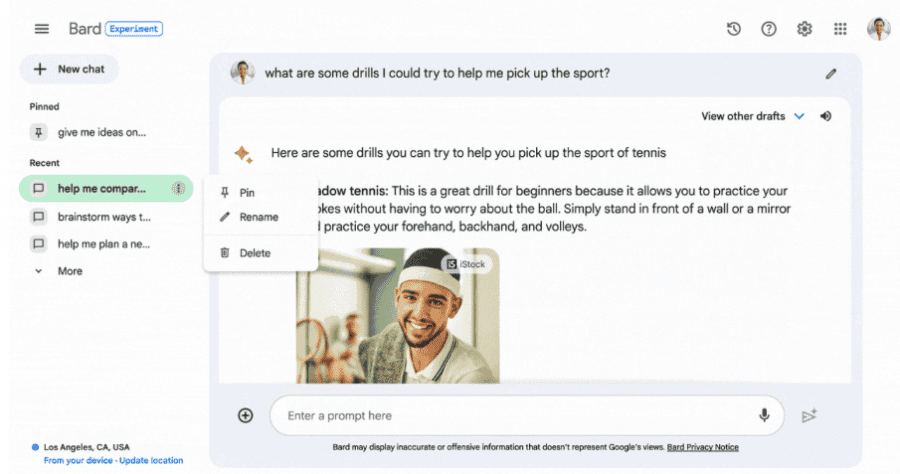

It is also now possible to pin, rename, and revisit recent conversations with Bard, which makes longer-running interactions with the model easier to pick up again. In addition, users can export Python code to Replit as well as Google Colab, and share part or all of a Bard conversation with others through publicly accessible links.