How to Use AI Motion Control for Professional Video Results

29 May 2026

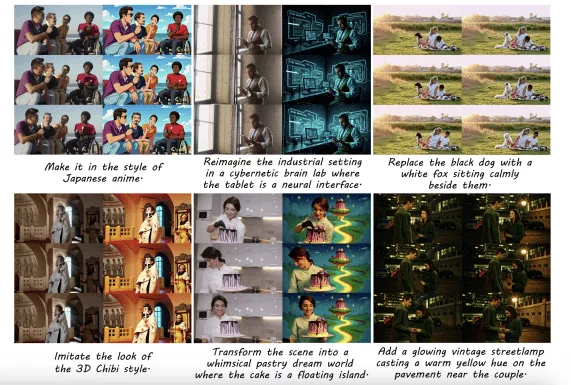

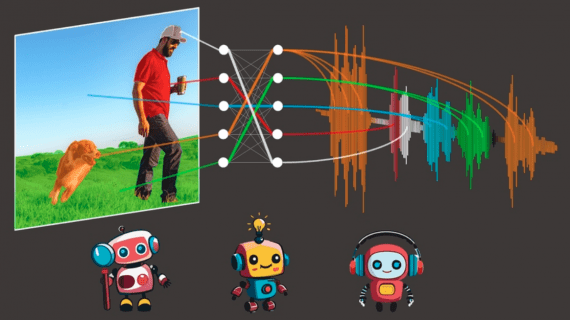

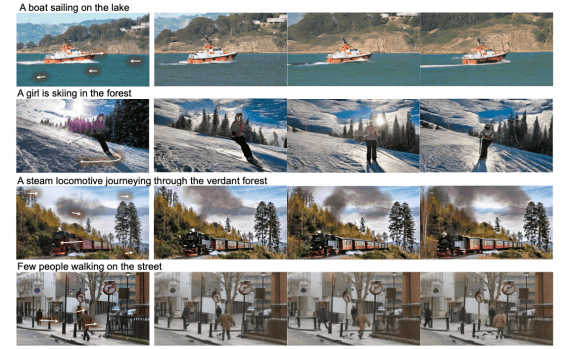

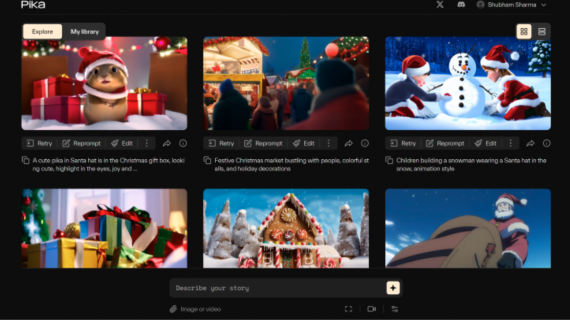

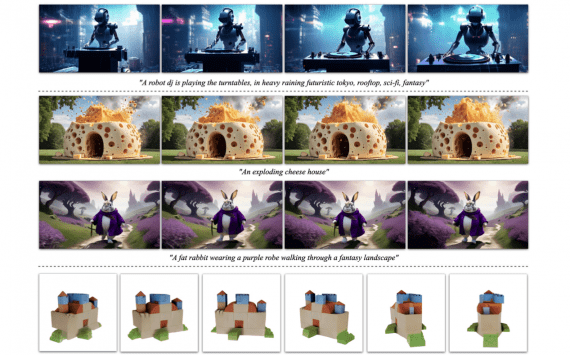

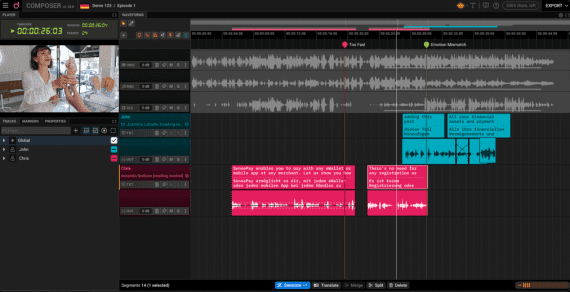

Video production has always demanded a careful balance between creative vision and technical execution. For years, achieving smooth, realistic motion in AI-generated video meant wrestling with inconsistent outputs, repeated generation…