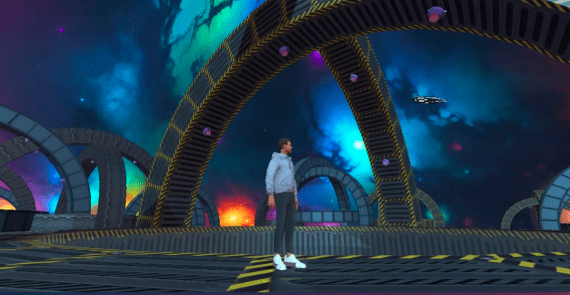

Yume1.5: An Open Model for Creating Interactive Virtual Worlds with Keyboard Control

5 January 2026

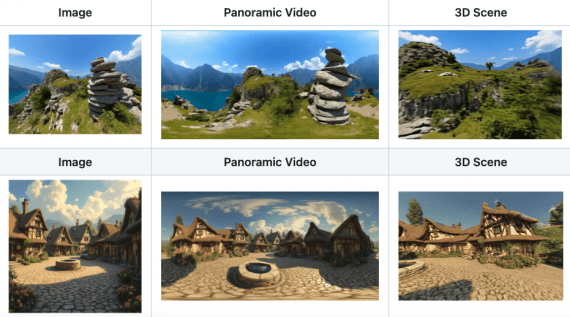

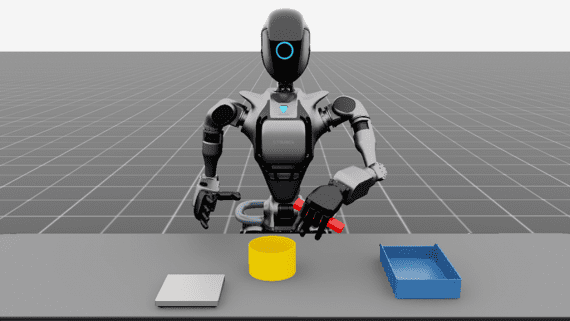

Researchers from Shanghai AI Laboratory and Fudan University have published Yume1.5, a model for generating interactive virtual worlds that can be controlled directly from the keyboard. Unlike regular video generation,…